Introduction:

Citrix NetScaler on Microsoft Azure has come a long way from its early beginnings of single IP mode with no High Availability to Multi NIC/IP mode with advanced High Availability. All of this has been driven by continuous Microsoft Azure enhancements to the underlying cloud infrastructure and services, given that NetScaler on Azure is to some extent the same NetScaler we know on-premises.

NetScaler HA on Microsoft Azure has been a controversial subject because of how NetScaler networking requirements interact with the Azure network architecture, hence the many deployment options and the considerations, requirements, limitations, and prerequisites that come with them. I have been trying to document how NetScaler HA can be configured on Azure for some time now through these earlier blog posts:

Citrix NetScaler HA on Microsoft Azure “The Multi NIC/IP Untold Truth”

Configuring Multiple VIPs for Citrix NetScaler VPX on Microsoft Azure ARM Cloud Guide

Configuring Active-Active Citrix NetScaler Load Balancing on Microsoft Azure Resource Manager

Configuring Multiple IP Addresses for Citrix NetScaler VPX on Microsoft Azure Resource Manager

Citrix NetScaler VM Bandwidth Sizing on Microsoft Azure

NetScaler HA on Microsoft Azure “Planned Maintenance”

As Azure keeps evolving, so do the ways NetScaler can be deployed, including in High Availability Active-Active or Active-Passive mode. In our last UAE Citrix User Group Community meeting, I conducted a hands-on presentation/demonstration on the various ways HA can be configured for NS on Azure, including the latest HA Template Active-Passive considerations.

Many found it hard to grasp, and so did I until recently, so I have decided to document the various ways discussed and also demonstrate how to configure the HA template end-to-end, because the Citrix documentation/Reference Architecture for some reason cuts off at a critical stage, where one needs to understand the next steps to establish services on this HA deployment.

Citrix NetScaler on Microsoft Azure Options:

Let’s briefly discuss the various ways NetScaler can be configured on Azure with HA options and then move on to the hands-on demonstration of the recently published HA template with Active-Passive mode (INC & ALB DSR). Kindly go through the previous blog posts mentioned above for a detailed description of some of the options below:

NetScaler VPX Single IP Mode

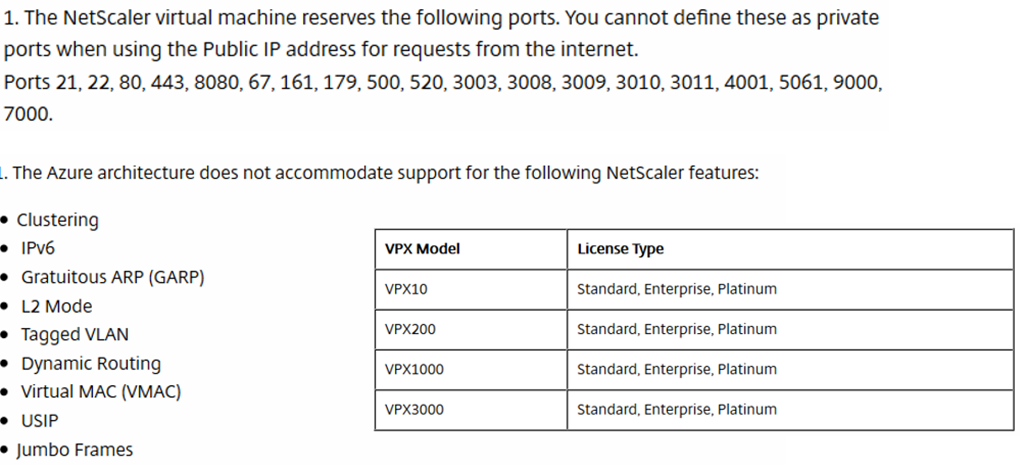

Before Microsoft Azure supported multiple IPs/NICs on a single VM, NetScaler ran in single IP mode, which means your subnet IP, NetScaler IP, and VIPs all use one IP: the primary IP assigned to the NIC attached to the NetScaler (since only a single IP could be assigned to the VM). In order to host services on the NS, the same IP is used with different ports to distinguish them.

This meant that if my NetScaler IP is 10.0.0.1 and I wanted to add an IIS port 80 load balancer, for example, I would assign it the same IP address but choose a different port such as 15001. With single IP mode, some well-known ports such as 80 or 443 are reserved for NetScaler use, so they cannot be assigned.

The issue here was that users would have to access these services on their assigned ports, such as http://10.0.0.1:15001, and that was unacceptable. The way around that was to front the NetScaler with an Azure load balancer, not because we want load balancing (well, we do, but more on that later) but because Azure ALB is capable of PAT/NAT while an Azure NSG (Network Security Group) is not. The ALB would have an IP and can use a well-known port, 80 in our case, and would PAT to port 15001 to access the service.

Users are given the IP/hostname of the Azure load balancer frontend with port 80, and the ALB will PAT this port to 15001 for the service hosted on NetScaler. This means that for every virtual server hosted on NetScaler, you need an Azure ALB frontend IP, backend pool, and load balancing rule, unless your users are fine with using the custom port assigned to that virtual server directly on NetScaler.
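To make the PAT idea concrete, here is a minimal sketch of the translation the ALB performs in single IP mode. It is only a model in plain Python, not Azure code: the `PatRule` class, the `translate` function, and the frontend address 203.0.113.10 are assumptions made up for illustration, while 10.0.0.1 and port 15001 come from the example above.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class PatRule:
    """One ALB load balancing rule: frontend IP/port mapped to backend IP/port."""
    frontend_ip: str
    frontend_port: int
    backend_ip: str
    backend_port: int


def translate(rules: List[PatRule], dst_ip: str, dst_port: int) -> Optional[Tuple[str, int]]:
    """Return the (ip, port) a request is forwarded to, or None if no rule matches."""
    for rule in rules:
        if (rule.frontend_ip, rule.frontend_port) == (dst_ip, dst_port):
            return (rule.backend_ip, rule.backend_port)
    return None


# Hypothetical frontend 203.0.113.10:80 is translated to the NetScaler on 10.0.0.1:15001.
rules = [PatRule("203.0.113.10", 80, "10.0.0.1", 15001)]
print(translate(rules, "203.0.113.10", 80))   # ('10.0.0.1', 15001)
print(translate(rules, "203.0.113.10", 443))  # None, no rule for this port
```

Every additional virtual server on the NetScaler would add one more `PatRule` entry, which is exactly the per-service ALB overhead described above.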

NetScaler VPX Single IP Mode HA

The same requirements apply when deploying NetScaler Single IP in High Availability mode on Azure as discussed above, but with different considerations. To cut a long story short, SNIPs cannot float on the Azure network because an IP is statically assigned to an individual NIC, which means that every NetScaler must have its own SNIP, and since these NS appliances are in single IP mode, the only option here is to have one NIC/IP per NS.

Though the NetScaler documentation has been updated to state that Active-Passive is supported in this scenario, I do not see how that is possible unless it is Multi IP mode on a single NIC (even if that IP is the LB frontend IP and INC/DSR is used), which would work but has more considerations than stated (the documentation is a disaster). For Single IP mode, meaning a single NIC/IP, only Active-Active is supported. If you want to try Active-Passive, refer to the NS HA Template section and apply the same concept here manually; I have not tested it because it makes no sense to do that in Single IP mode.

Active-Active HA means that both NetScalers are configured independently with exactly the same configuration, obviously using different IPs, and then Azure ALB is used to load balance requests between both NetScalers. I have documented the configuration required for this in an earlier post, and the ALB configuration applies to both single and Multi NIC/IP Active-Active NS as long as the backend pools and ports are specified correctly.

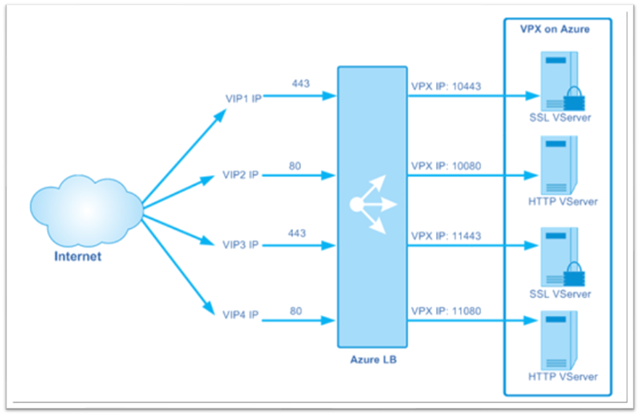

There is a mix-up in the Citrix documentation between the internal and external architecture pictures and their related technical write-ups. To clarify: if a service (LB or AG or …) is going to be accessed from the outside using a public IP, then an external ALB is required, and if a service is going to be accessed from the inside using a private IP, then an internal ALB is required. If a service needs to be accessed from both outside and inside, then the same service will require an external ALB and an internal ALB configured independently.
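The rule above is simple enough to write down as a tiny helper. This is just a sketch of the decision, and the function name `required_albs` is my own, not something from Azure or Citrix tooling:

```python
from typing import List


def required_albs(external_access: bool, internal_access: bool) -> List[str]:
    """List the Azure load balancer types a NetScaler-hosted service needs."""
    albs = []
    if external_access:
        albs.append("external")  # fronted by a public IP
    if internal_access:
        albs.append("internal")  # fronted by a private IP
    return albs


# A service reached from both outside and inside needs two independent ALBs.
print(required_albs(external_access=True, internal_access=True))  # ['external', 'internal']
```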

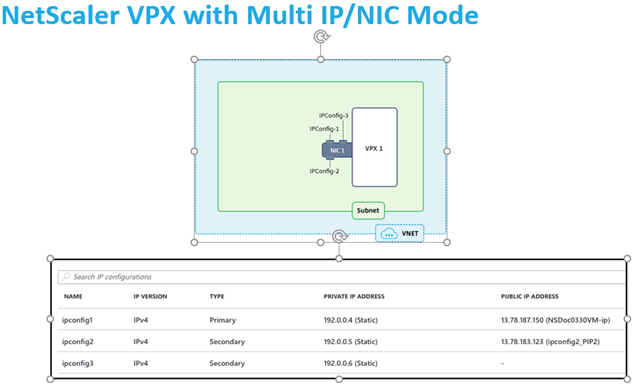

NetScaler VPX Multi NIC/IP Mode

After Azure released official support for multiple NICs/IPs on Azure Virtual Machines, NetScaler was no longer limited to single IP mode, given that the limitation was not NetScaler-related to begin with. Adding multiple IPs on the same NIC can be done directly from the GUI, and each IP can then be assigned as a SNIP or VIP on NS, though the first (primary) IP assigned to a NIC is always the NetScaler IP (NSIP).

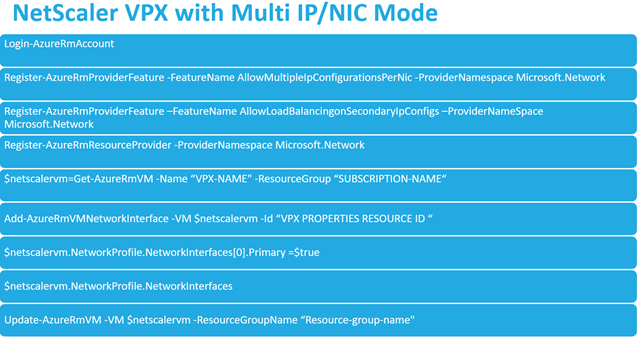

For adding multiple NICs, the GUI is not supported specifically for NetScaler VMs, so a couple of PowerShell commands should be used to add the NIC. After the NIC has been added, IPs can be assigned through the Azure GUI, same as for the primary NIC. For management purposes, the public IP would be assigned to the primary IP since by default it is the NSIP. One note here: with Multi IP mode (single NIC), when a port is opened on the NSG, it is opened to all IPs associated with that NIC, so be careful when segregation of traffic is required (in Multi NIC mode, each NIC has its own NSG).

For services that require external access, a public IP is assigned directly to the VIP hosting the service, which would be a secondary IP on the NS NIC, after which the specified port is opened on the NSG to allow access from external users. Note that because we have multiple IPs, the well-known port limitation that existed with single IP mode no longer applies, and services can be created on any well-known port. Also remember that you need a subnet IP (SNIP) for every NIC added.

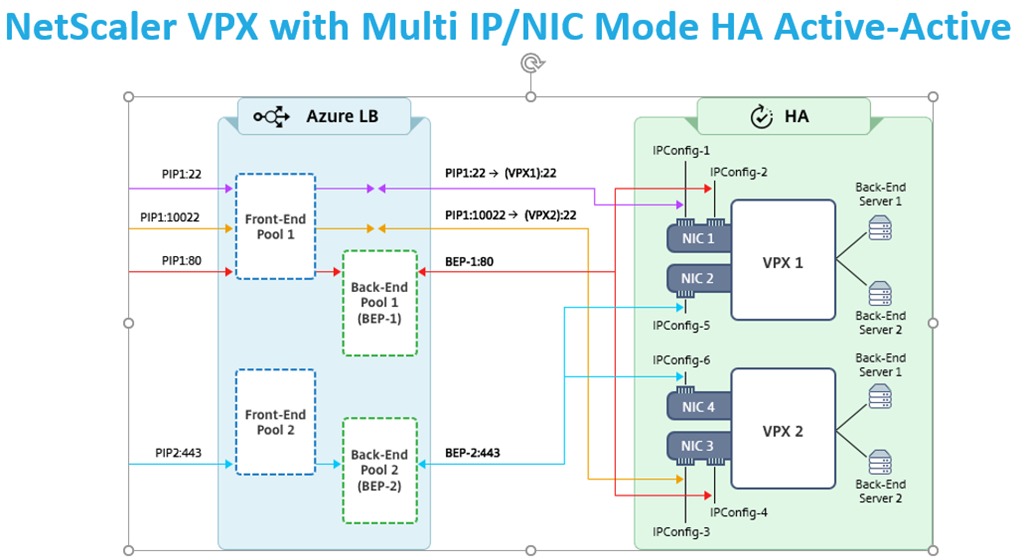

NetScaler VPX Multi NIC/IP HA Active-Active Mode

Active-Active HA for NetScaler VPX Multi NIC/IP is fairly simple as long as the Azure Load Balancer backend pools are configured accordingly and assigned to the load balancer depending on the services required. In this scenario, each NetScaler is configured independently with the exact same configuration except for different IPs, and Azure ALB is used to distribute traffic between the NS appliances.

Every service that is going to be hosted on NetScaler needs to be fronted by an Azure Load Balancer, whether internal or external. Backend pools will vary because each should point to the IP that is hosting the service, for example a load balancing virtual server. A monitor (health probe) should also be in place on the port assigned to that service. This is required so that the Azure LB fails over a service if it stops working while both NetScalers are still functional.

The end result is to configure both NetScalers with the same virtual servers and then, for each virtual server, create an ALB (external or internal depending on the type of access) to front it, so users get the ALB IP/hostname, not the service hosted on NS. I know it’s a hassle load balancing a load balanced virtual server and creating a specific monitor, backend pool, frontend IP, and load balancing rule for each.
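To show how much ALB configuration each virtual server drags along in Active-Active mode, here is a rough sketch that derives the ALB pieces from a virtual server definition. Everything in it is illustrative: `VirtualServer`, `alb_plan`, and the 10.0.0.5/10.0.0.6 addresses are assumptions, and the output is a plain dictionary, not something Azure consumes.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class VirtualServer:
    name: str
    port: int
    vip_per_node: Dict[str, str]  # NetScaler name -> VIP configured on that node
    external: bool                # True if reached through a public IP


def alb_plan(vserver: VirtualServer) -> dict:
    """Describe the ALB objects that one virtual server needs in Active-Active mode."""
    return {
        "load_balancer": "external" if vserver.external else "internal",
        "frontend": f"{vserver.name}-frontend",
        # Backend pool points at the VIP hosting the service on each NetScaler.
        "backend_pool": sorted(vserver.vip_per_node.values()),
        # The monitor checks the service port so a dead service is taken out of rotation.
        "probe_port": vserver.port,
        "rule": {"frontend_port": vserver.port, "backend_port": vserver.port},
    }


# Hypothetical IIS virtual server configured identically on both nodes with different VIPs.
iis = VirtualServer("iis", 80, {"NS1": "10.0.0.5", "NS2": "10.0.0.6"}, external=True)
print(alb_plan(iis))
```

Running `alb_plan` once per virtual server gives a quick checklist of what has to exist on the Azure side before the service is reachable.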

NetScaler VPX Multi NIC/IP HA Active-Passive

So why do we have an Active-Passive section and another Active-Passive (HA Template) section? Because both work differently and have somewhat different configuration and requirements. In a legacy Active-Passive deployment, or to put it simply, a standard on-premises Active-Passive deployment, VIPs and SNIPs float between NetScalers while one NS is active and the other is passive.

On Azure, we cannot float IPs assigned to Azure VM NICs, neither VIPs nor SNIPs. If we just enabled HA Active-Passive on Azure NS out of the box, the configuration would succeed, but none of the services would work once the primary NS fails over, because those VIPs (configured on the primary NS and synced to the secondary NS) do not actually exist on the NIC assigned to the secondary passive NS.

To overcome this, we would have to create two virtual servers for every service we want to configure on NS, one with the primary NS VIP and one with the secondary NS VIP, then use Azure Load Balancer to load balance those requests with the required frontend IP, backend pools, LB rules, and monitor. For example, say we want to load balance IIS on port 80, NS1 (primary) has a VIP of 10.0.0.1, and NS2 (secondary) has a VIP of 10.0.1.1.

On NS1, which is primary (and syncs the config to the secondary NS2), we configure two load balancing virtual servers for the SAME service, one with IP 10.0.0.1 and one with IP 10.0.1.1. The ALB forwards requests to the primary NS1 virtual server 10.0.0.1 (based on the ALB backend pool and monitor), and when NS1 fails and the secondary NS2 takes over, the 10.0.1.1 virtual server takes the requests (this works because that IP actually exists on the NS2 NIC).
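The failover behaviour of this legacy approach can be modelled in a few lines. This is a simplified simulation, not real ALB logic: it assumes that only the current primary node answers the ALB monitor on its own VIP, and the names `BACKEND_VIPS`, `probe_passes`, and `alb_target` are invented for the sketch.

```python
from typing import Optional

# VIP that physically exists on each NetScaler NIC (addresses from the example above).
BACKEND_VIPS = {"NS1": "10.0.0.1", "NS2": "10.0.1.1"}


def probe_passes(node: str, primary: str) -> bool:
    """Simplifying assumption: only the current primary answers the ALB monitor."""
    return node == primary


def alb_target(primary: str) -> Optional[str]:
    """Return the VIP the ALB would send traffic to, given which node is primary."""
    healthy = [vip for node, vip in BACKEND_VIPS.items() if probe_passes(node, primary)]
    return healthy[0] if healthy else None


print(alb_target("NS1"))  # 10.0.0.1
print(alb_target("NS2"))  # 10.0.1.1
```

Both virtual servers exist in the synced configuration, but only the one whose IP lives on the active node's NIC ever receives traffic.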

In this configuration, DSR is not required when configuring the load balancing rules, but it is still a hassle that two virtual servers need to be created for every service. Also, don’t forget that the NSG needs to be configured on both NetScalers, regardless of whether Active-Active or Active-Passive is configured.

Configuring this would require a minimum of 2 NICs on every NetScaler and 2 subnets with all the related Azure Load Balancer config, so the best approach would be to deploy the HA template, which creates all of that, then start building those services without using DSR on the Azure LB. I don’t know why someone would go down this path anyhow, but it is an available option and I have tested it in the way described.

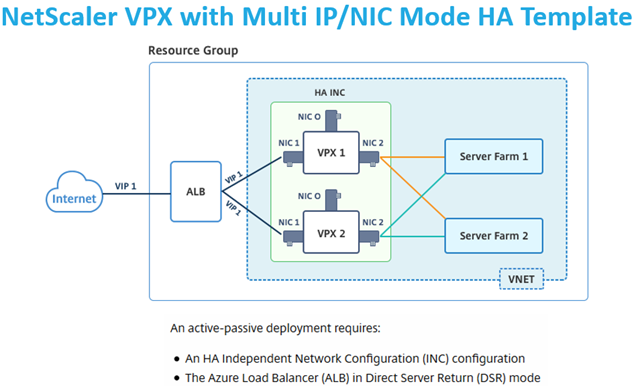

NetScaler VPX Multi NIC/IP HA Active-Passive (HA Template)

The previous Active-Passive approach required additional effort in creating additional virtual servers on NetScaler and additional IPs for VIPs on the Azure NS VM, so it wasn’t the best solution out there, and this led us to the HA template recently released by Citrix. I would say this approach to HA is the best among all discussed and is the recommended way, so I am going to showcase the configuration required to establish it, because the Citrix documentation is lacking in that area.

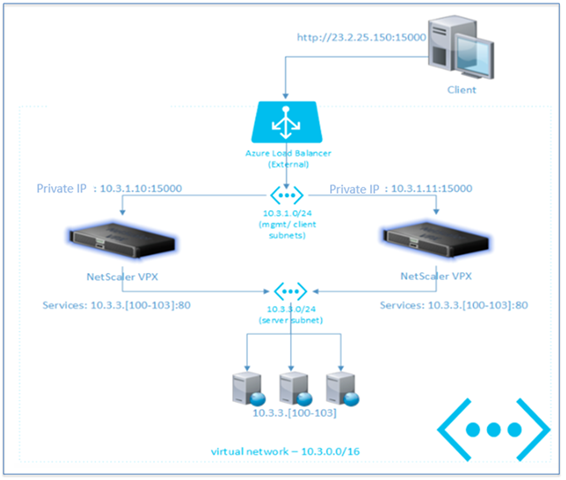

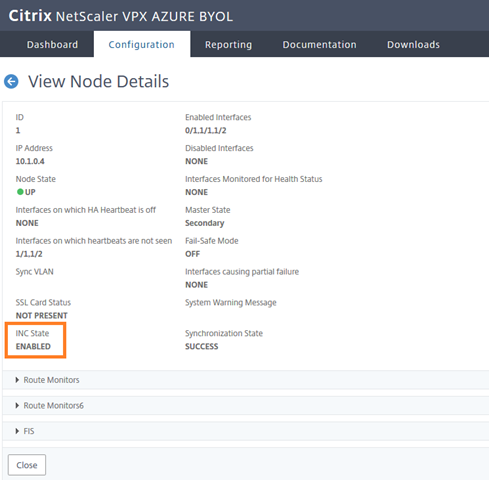

Before we deploy the HA template and test with a load balanced virtual server, let’s discuss what the HA template does and why it is different from previous approaches. The HA template configures two key components that make this all possible: INC for NS HA and DSR for Azure LB. In a nutshell, these two options allow the NetScaler to float the VIP of any virtual server while keeping the SNIP static and different for every NS in the HA pair.

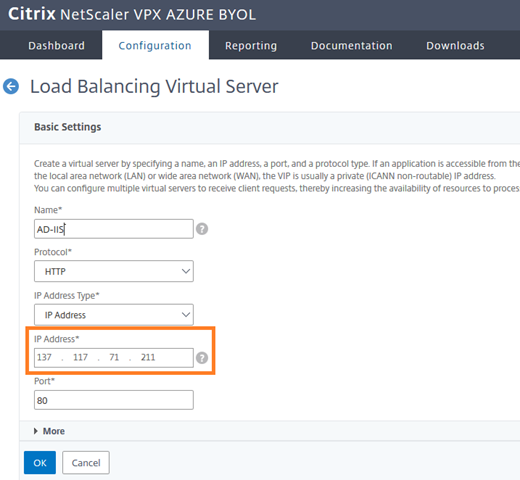

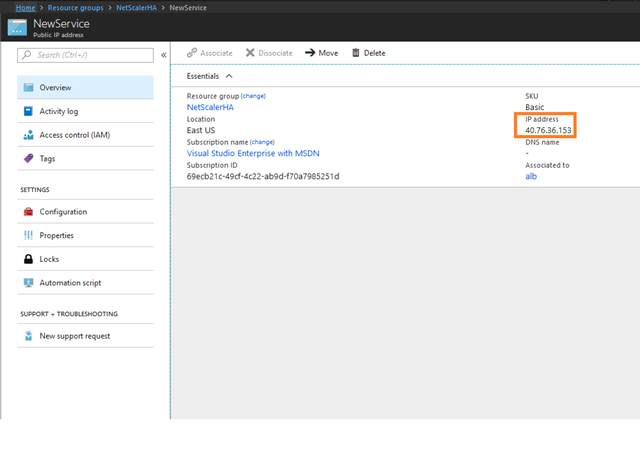

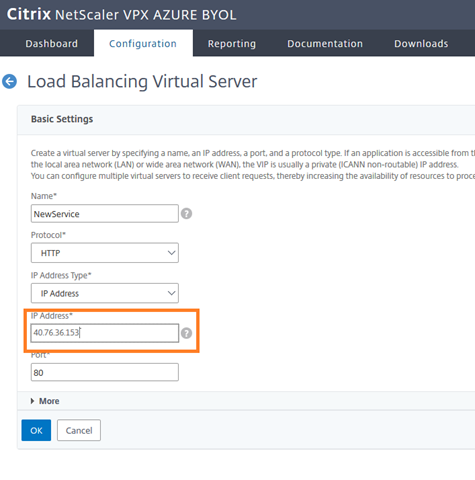

But we established that VIPs cannot float over the Azure network, and this still applies. What happens here is that the VIP configured in NetScaler is not an IP assigned to the NIC of one of the NetScalers but rather the frontend IP of the load balancer, whether public or private. To simplify, let’s assume we are load balancing an IIS server on port 80. This virtual server requires a VIP, which is normally assigned to the NS VM NIC and then added inside NS. In this situation, NO: the VIP added in NS is the actual frontend IP of the load balancer (YES, even if it is a public IP), which is why it is able to float to the secondary NS and keep working (INC and DSR in action).
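Here is a small model of why DSR makes the difference. With DSR (called Floating IP in the Azure load balancing rule) the ALB leaves the destination address as the frontend IP, while without it the destination is rewritten to the backend NIC IP. The code is only a simulation under that assumption; the function names and all addresses (203.0.113.10, 10.0.1.4, 10.0.1.5) are hypothetical.

```python
from typing import Dict, Set

FRONTEND_IP = "203.0.113.10"                           # hypothetical ALB frontend IP
NODE_NIC_IPS = {"NS1": "10.0.1.4", "NS2": "10.0.1.5"}  # hypothetical per-node NIC IPs
SYNCED_VIPS: Set[str] = {FRONTEND_IP}                  # VIP synced to both nodes by HA


def destination_seen_by_backend(frontend_ip: str, backend_nic_ip: str, dsr: bool) -> str:
    """With DSR the packet keeps the frontend IP; without it the ALB rewrites it."""
    return frontend_ip if dsr else backend_nic_ip


def vserver_answers(dest_ip: str, configured_vips: Set[str]) -> bool:
    """A virtual server only answers traffic addressed to one of its VIPs."""
    return dest_ip in configured_vips


def nodes_able_to_serve(dsr: bool) -> Dict[str, bool]:
    """For each node, would the synced virtual server answer traffic from the ALB?"""
    return {
        node: vserver_answers(destination_seen_by_backend(FRONTEND_IP, nic_ip, dsr), SYNCED_VIPS)
        for node, nic_ip in NODE_NIC_IPS.items()
    }


print(nodes_able_to_serve(dsr=True))   # {'NS1': True, 'NS2': True}
print(nodes_able_to_serve(dsr=False))  # {'NS1': False, 'NS2': False}
```

Because the same VIP matches on either node, a single virtual server is enough, and the ALB monitor decides which node actually receives the traffic.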

Deploy NetScaler HA Azure Template:

Enough chit chat, time to demonstrate this end to end, making sure that we have a functional NS HA with a load balancing virtual server that can be accessed on the primary NetScaler, and on the secondary NetScaler once failed over, without double the resources or manual intervention:

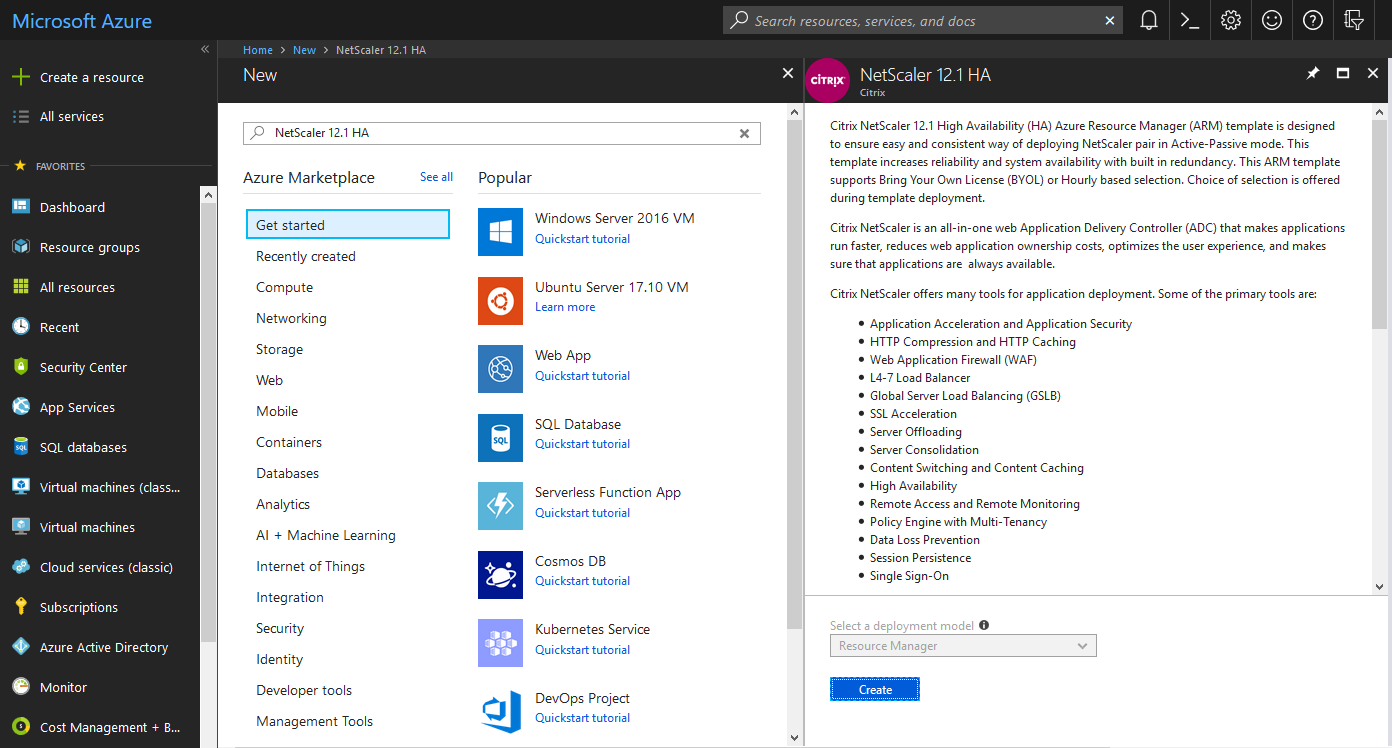

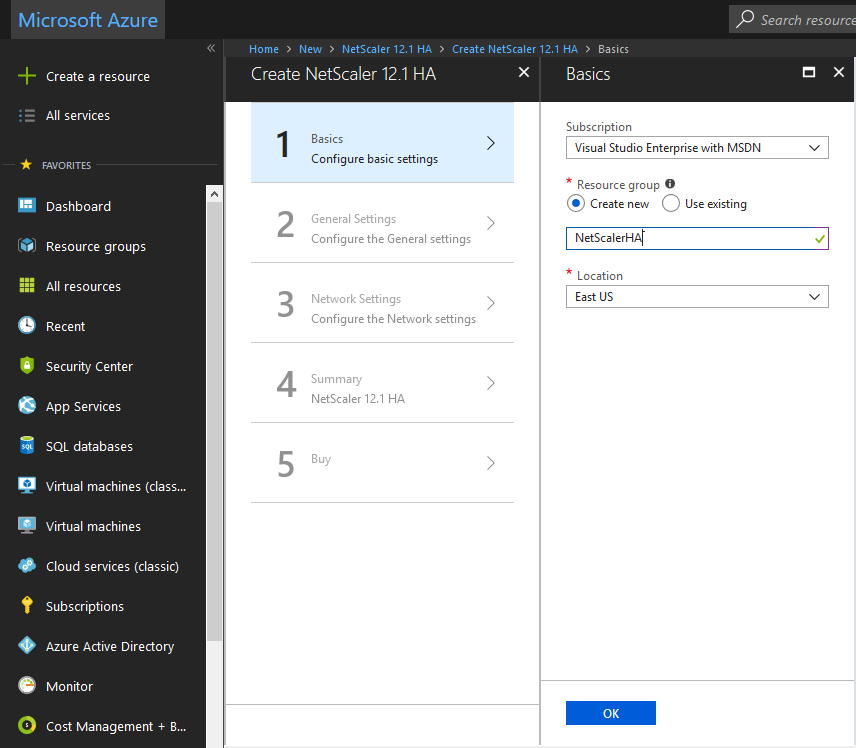

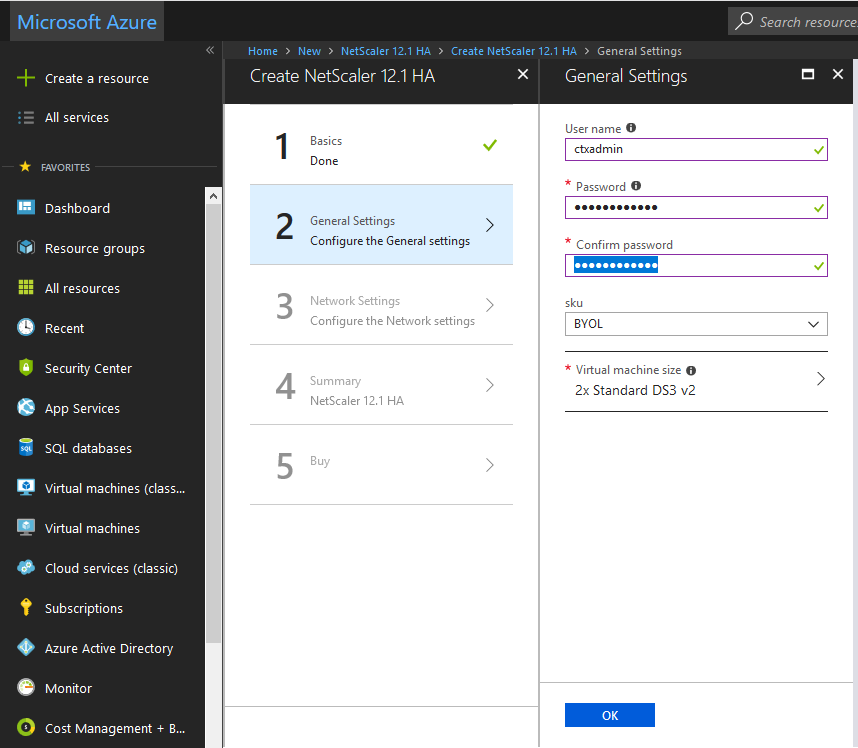

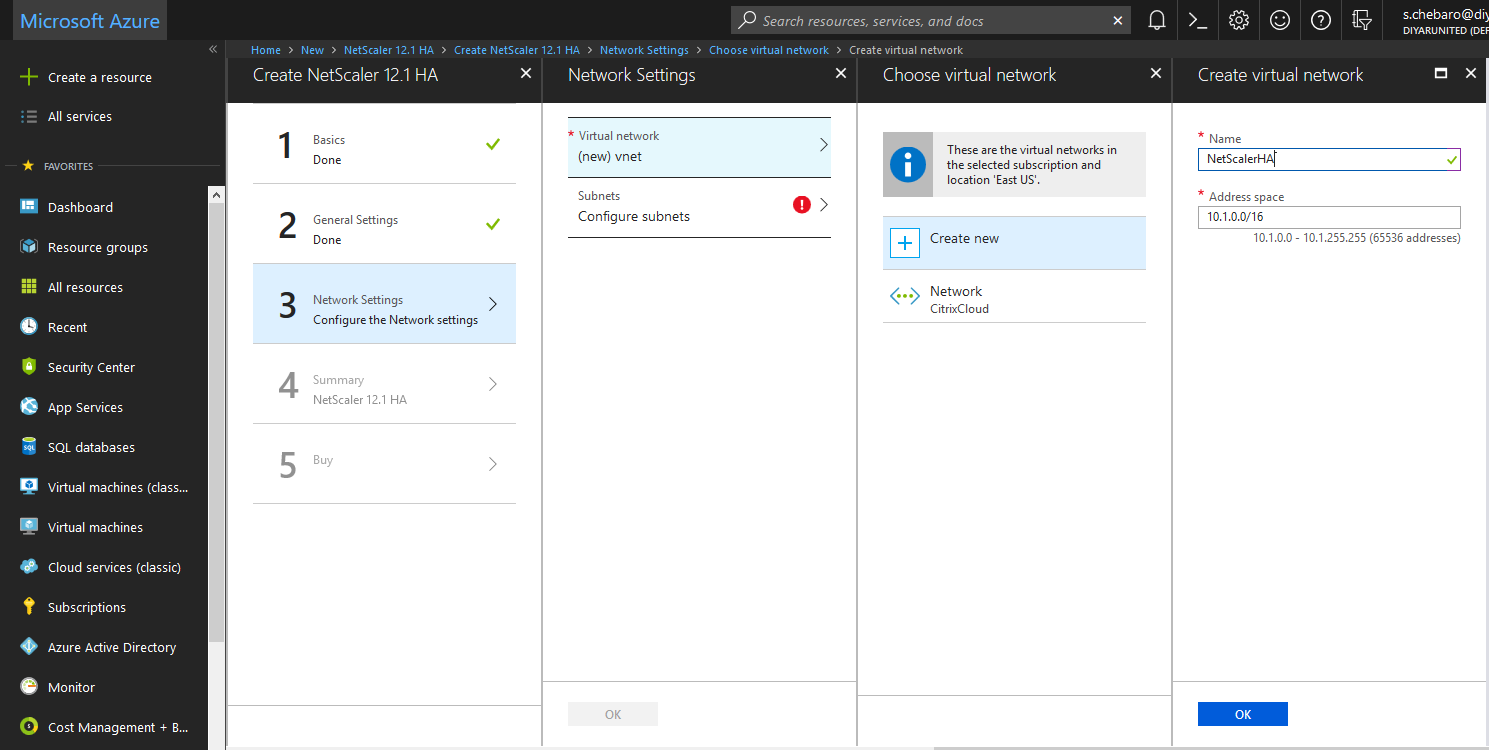

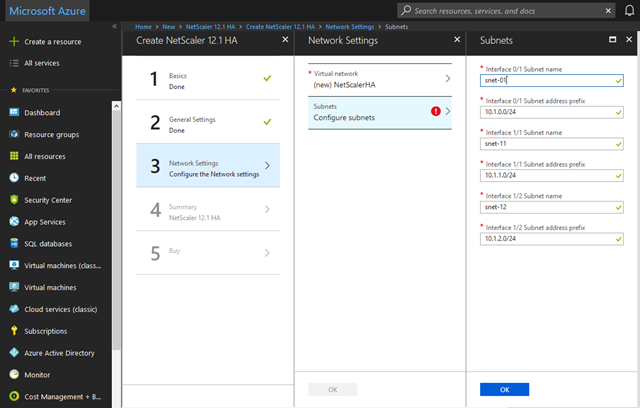

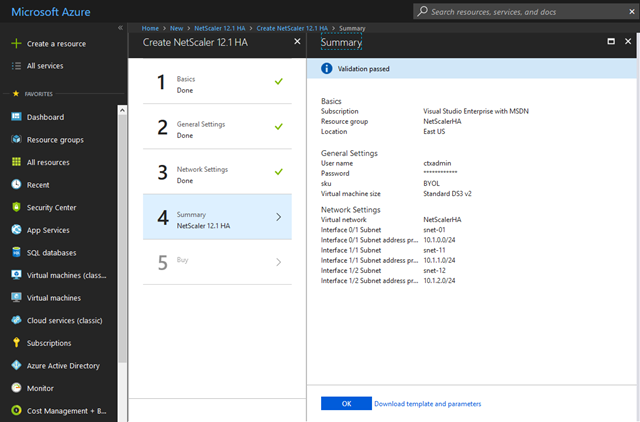

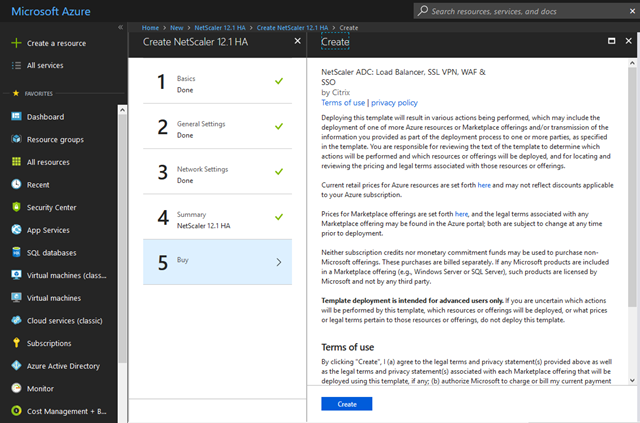

Deploy the NetScaler HA 12.1 Template from Microsoft Azure:

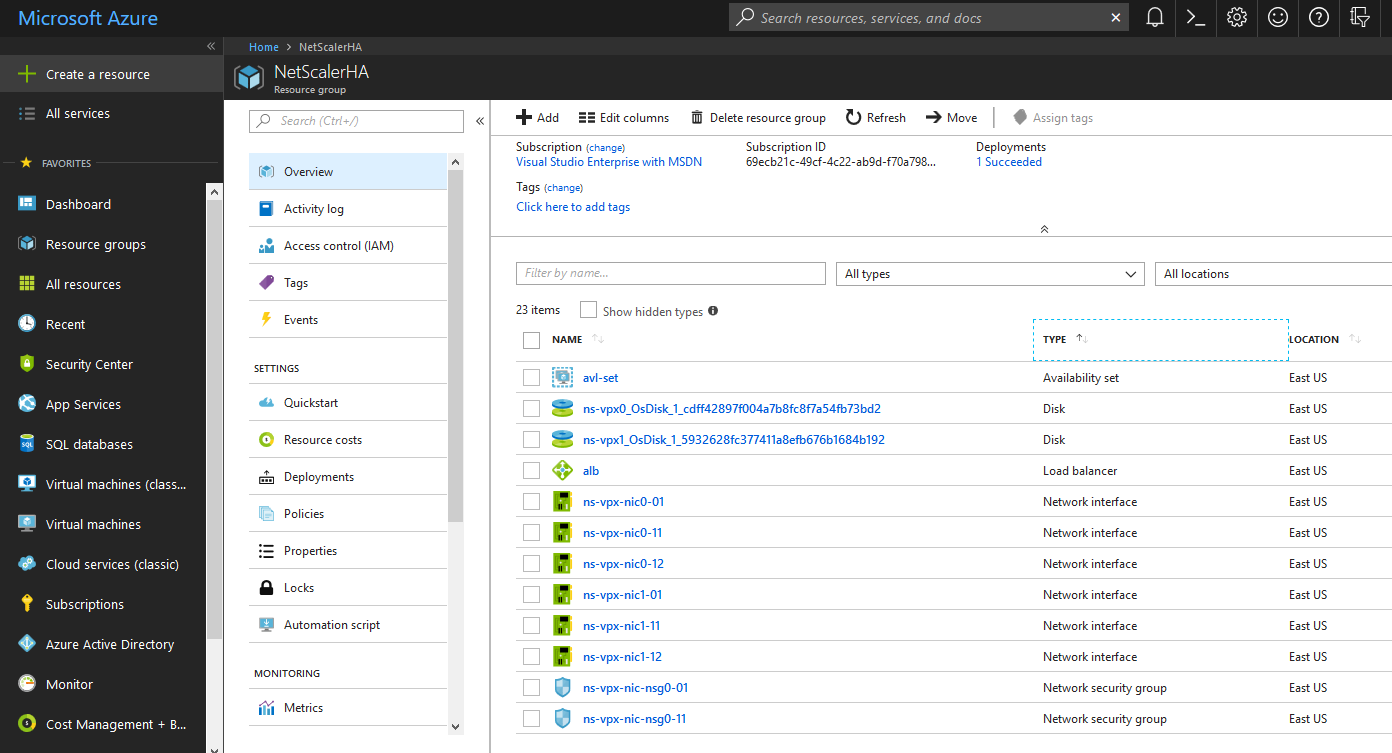

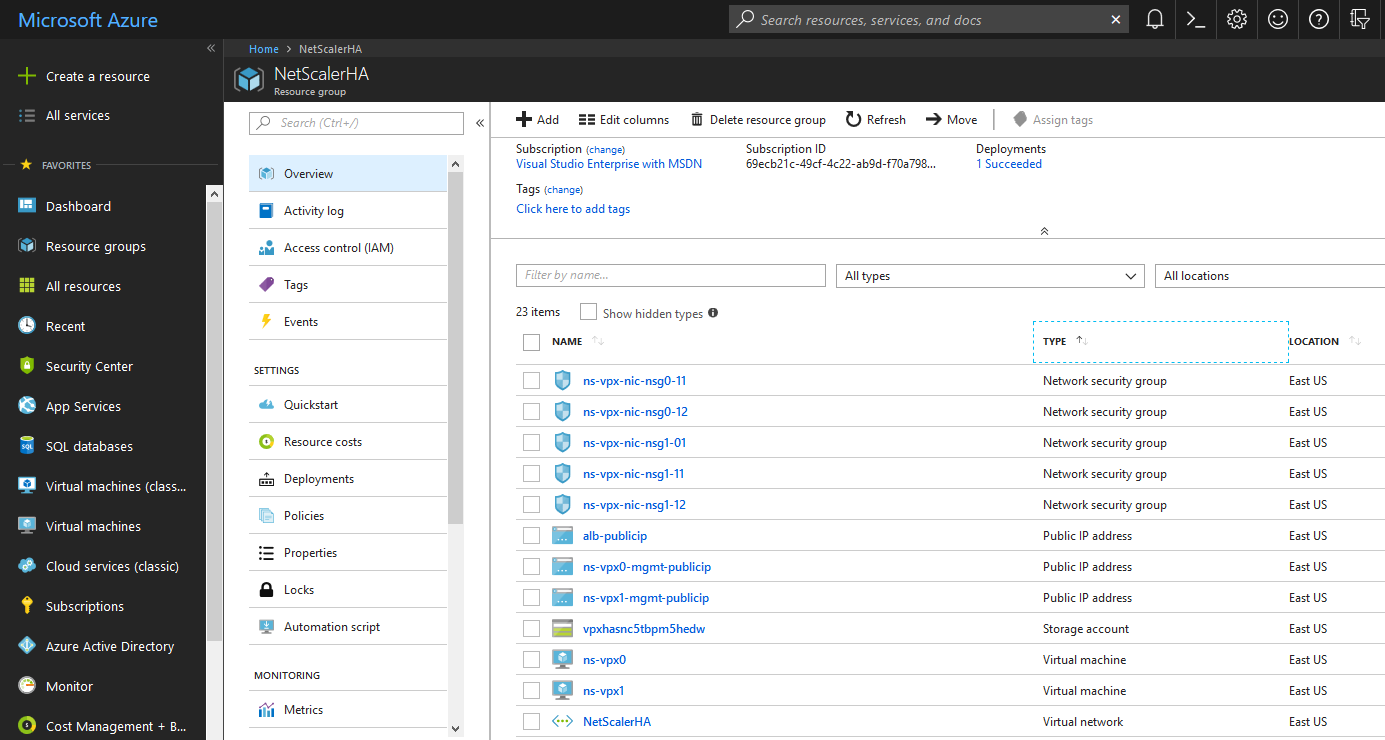

Three NICs and Three Subnets are required for this type of deployment. Existing vNET and subnets can be used but I have opted for new ones for the purpose of this demonstration.

These are the resources configured automatically when the HA template is deployed.

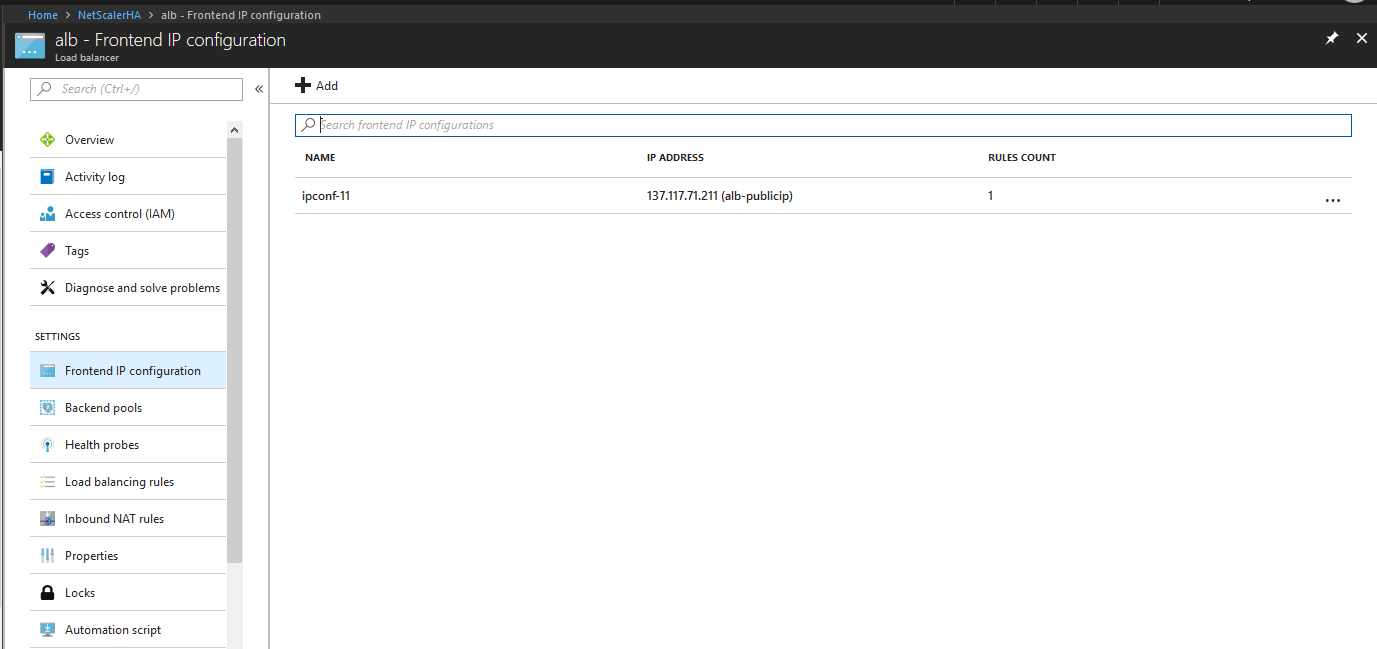

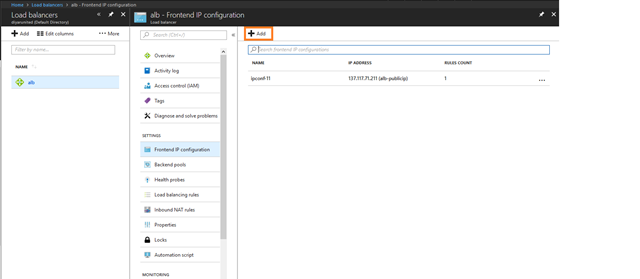

By default, one public frontend IP is added, which can be used for any service that will be hosted on NetScaler and publicly accessible. Every additional service on the same port will require a different frontend IP, which will later be added as the service VIP on NetScaler.
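The frontend IP rule boils down to this: a frontend IP and port pair can only be used by one load balancing rule. A minimal sketch of that check follows, ignoring the protocol for simplicity; `reusable_frontend` and the sample address are assumptions for illustration only.

```python
from typing import Iterable, Optional, Set, Tuple


def reusable_frontend(rules: Set[Tuple[str, int]],
                      frontend_ips: Iterable[str],
                      port: int) -> Optional[str]:
    """Return an existing frontend IP that is still free on `port`, else None."""
    for ip in frontend_ips:
        if (ip, port) not in rules:
            return ip
    return None  # every frontend IP already uses this port: add a new frontend IP


# The template's default rule: the default frontend IP (hypothetical address) on port 80.
rules = {("203.0.113.10", 80)}
frontend_ips = ["203.0.113.10"]

print(reusable_frontend(rules, frontend_ips, 443))  # 203.0.113.10
print(reusable_frontend(rules, frontend_ips, 80))   # None
```

In both cases a new load balancing rule is still needed; the check only tells you whether a new frontend IP (and therefore a new VIP on NetScaler) is needed as well.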

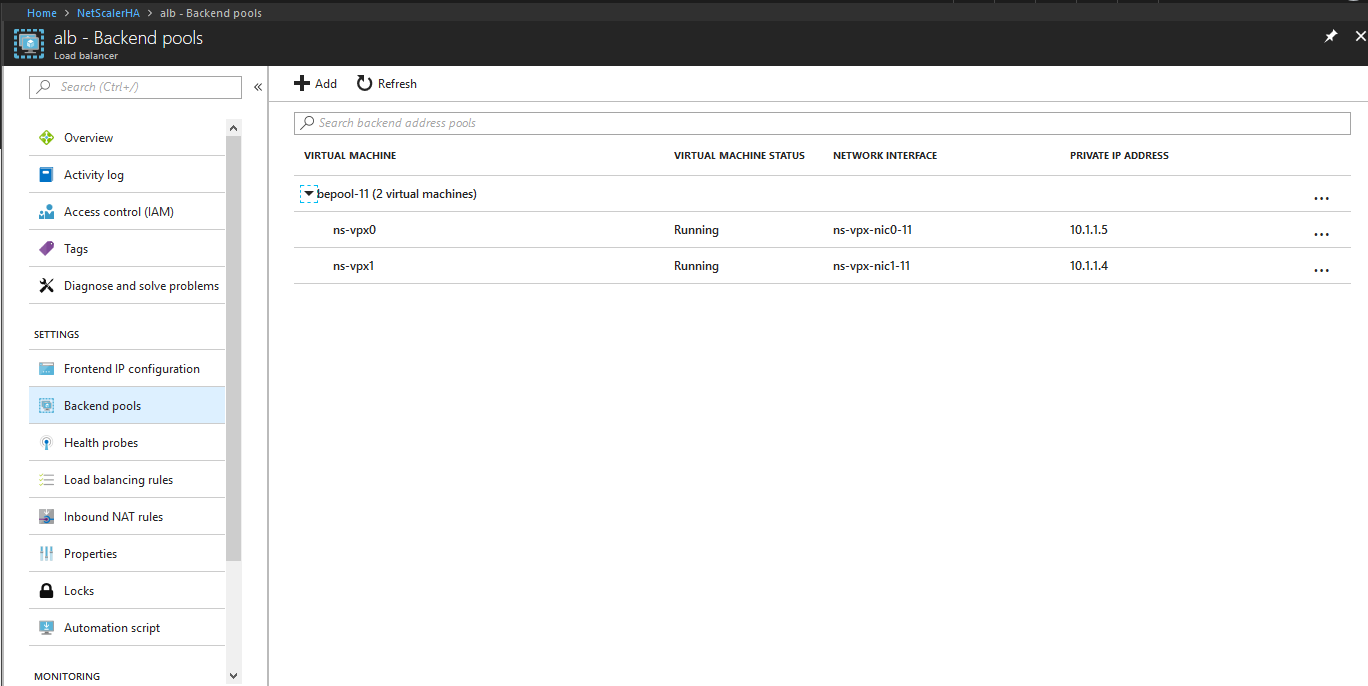

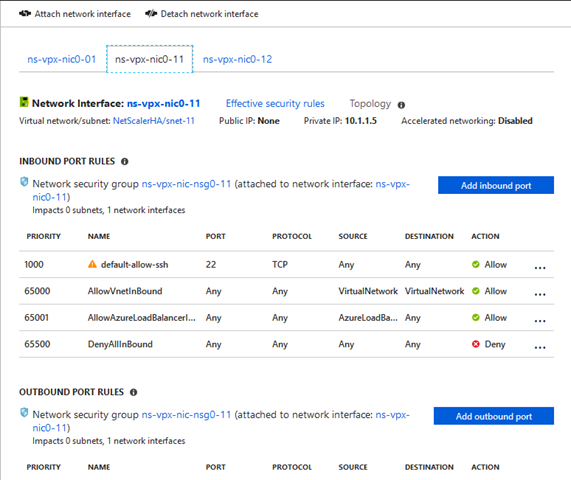

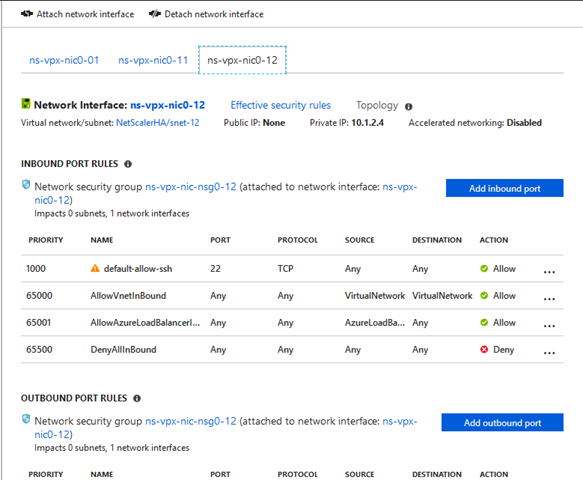

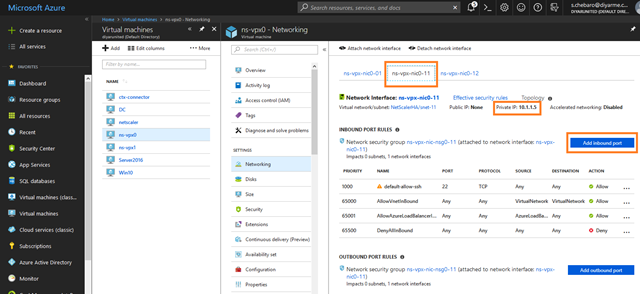

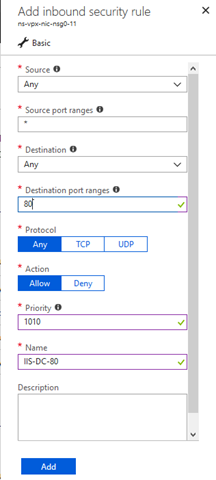

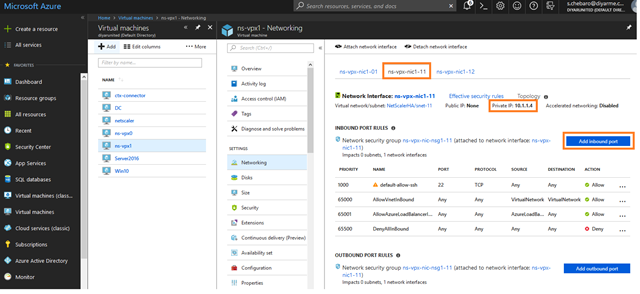

Azure Load Balancer for NetScaler will communicate with the following SNIPs assigned on each NS to determine which NS is primary and active. The reason to note this is that later on, for any service to be published, the port will need to be opened on the NSGs assigned to the NICs hosting those IP addresses.

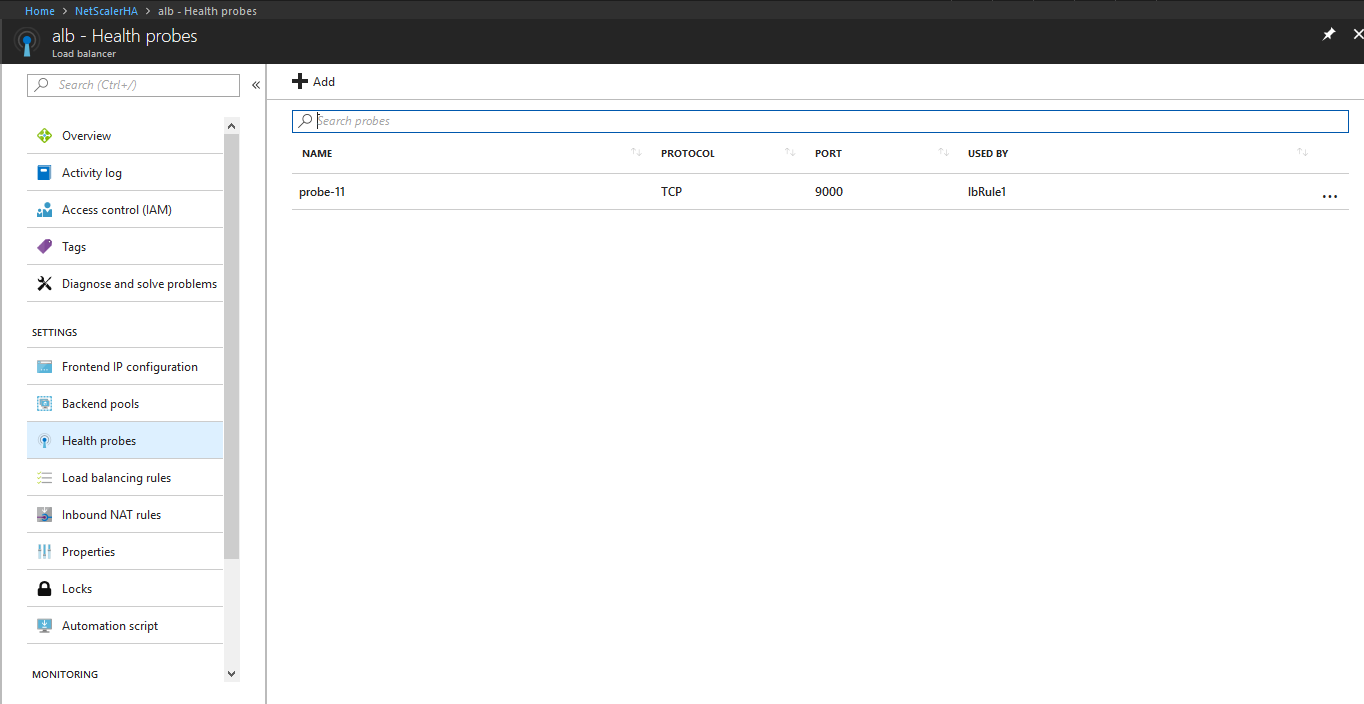

Azure Load Balancer will use TCP 9000 to communicate with both NetScalers and determine the status of each in order to load balance requests accordingly.
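If you want to check from a VM inside the vNET what such a probe would see, a plain TCP connect test is a reasonable approximation. This sketch assumes the probe is a simple TCP handshake on port 9000 against the SNIP; `tcp_probe` is my own helper name and the SNIP in the usage comment is hypothetical.

```python
import socket


def tcp_probe(host: str, port: int = 9000, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port can be established."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused, timed out, or unreachable all count as a failed probe.
        return False


# Example usage with a hypothetical SNIP on the second NIC:
#   tcp_probe("10.0.1.4")  # True only if the node answers on TCP 9000
```

Running this against the SNIP of each node should show which one the ALB currently considers healthy, under the assumption stated above.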

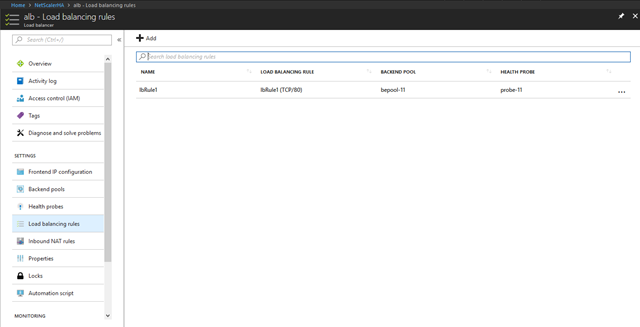

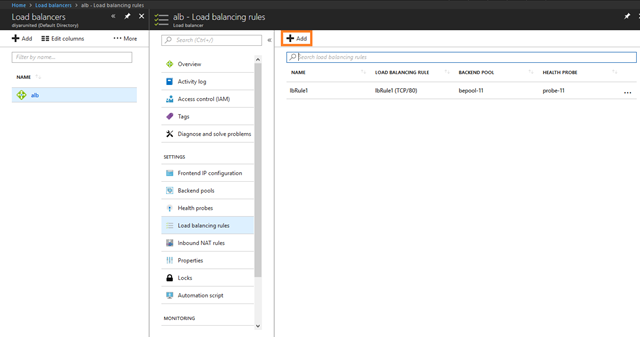

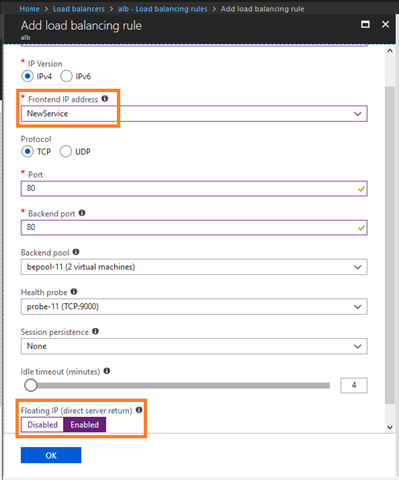

This is the load balancing rule that determines the public IP, port, and service that is going to be accessible and load balanced. Note that each future service will need a new load balancing rule and make sure that DSR is enabled.

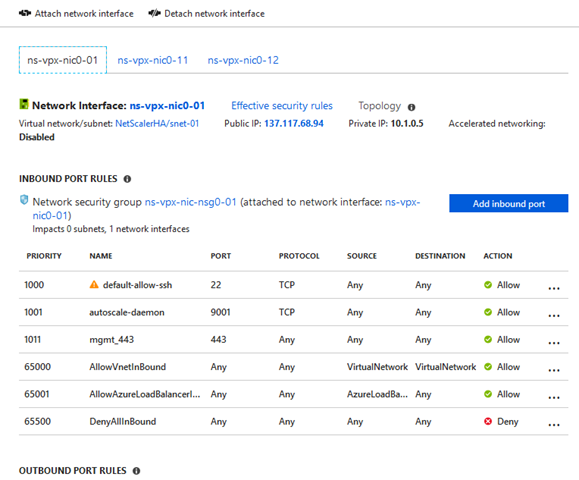

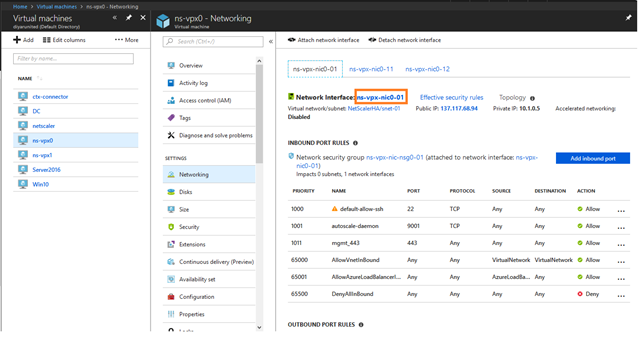

Every NS VM will have three NICs attached, each on a different subnet. The first NIC holds the NSIP (mgmt.), and each of the other two will have a SNIP from its subnet configured in NetScaler. The second NIC is the one that Azure ALB uses to determine the health and status of services, and the third can be used for backend services.

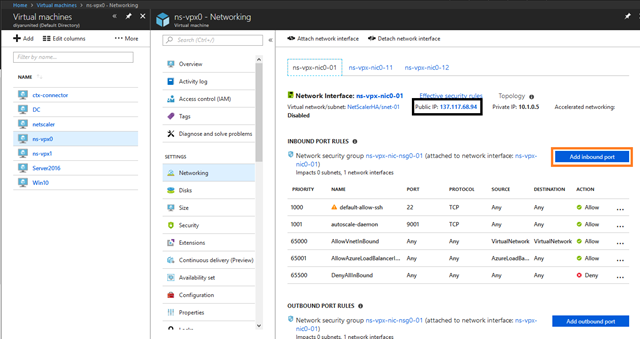

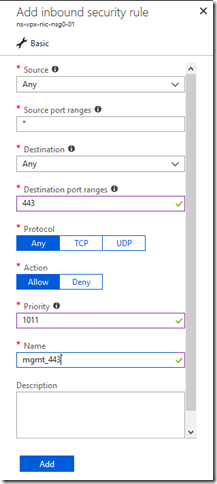

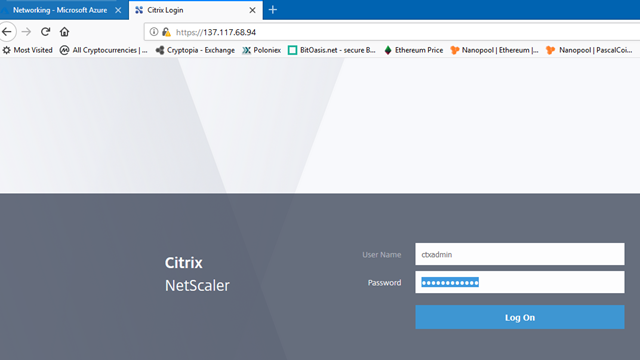

If you have a bastion host to connect to your Azure environment and use that for management then you don’t need to open 443 for the public IP assigned to mgmt. NIC in order to gain GUI access to NetScaler. If not, we need to open the port to manage NetScaler accordingly. These public IPs are only for mgmt. and can be removed with no impact to NS config.

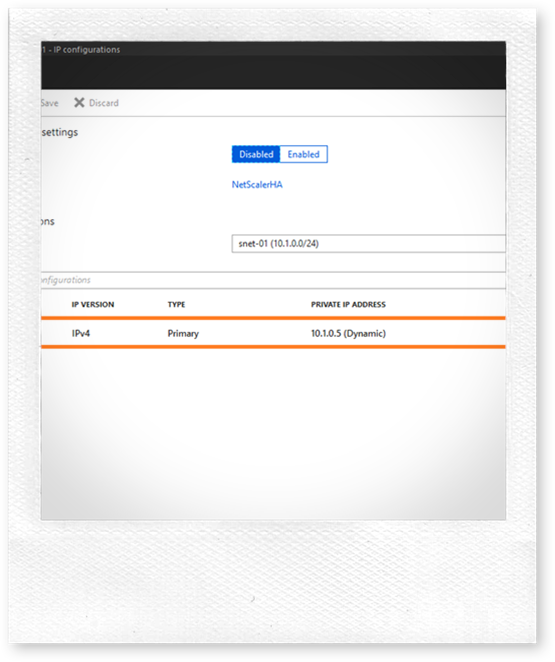

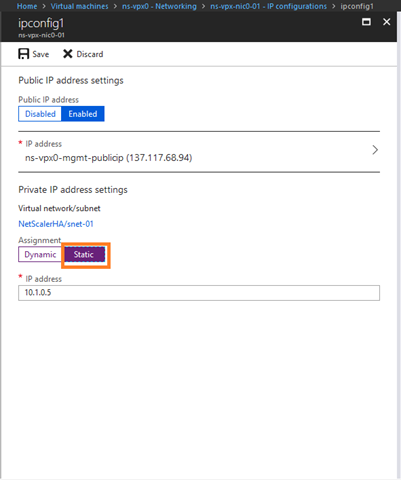

On all NetScaler NICs (6 in total across both NS), change the IP assignment to static, or else you might lose your IP when you restart the VM.

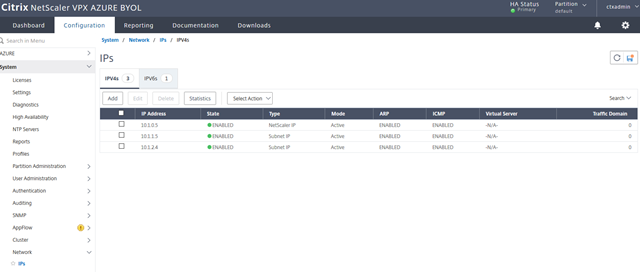

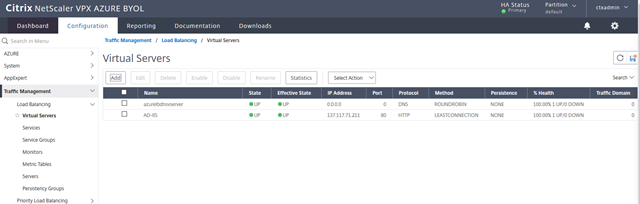

Now that I have opened mgmt. port 443 for the public IP assigned to NS, let’s log in, double check the existing configuration, and deploy a virtual server that will load balance my IIS server on port 80 to test NS HA:

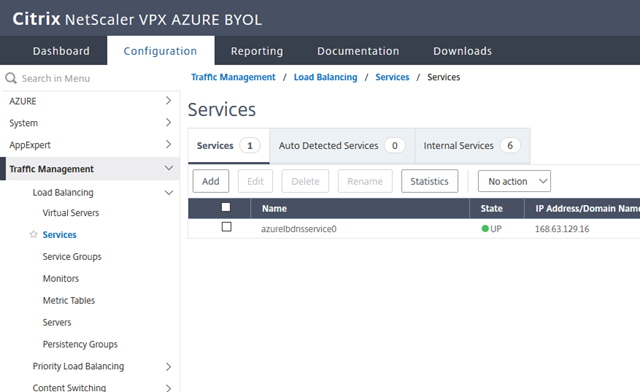

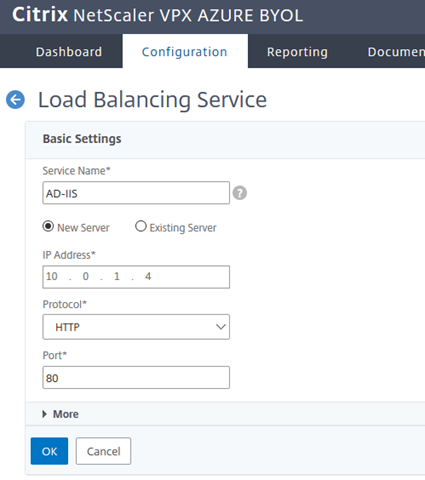

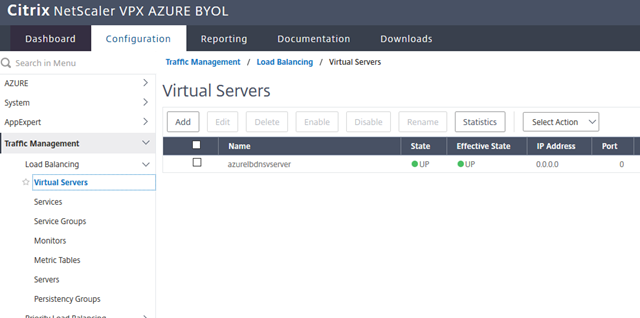

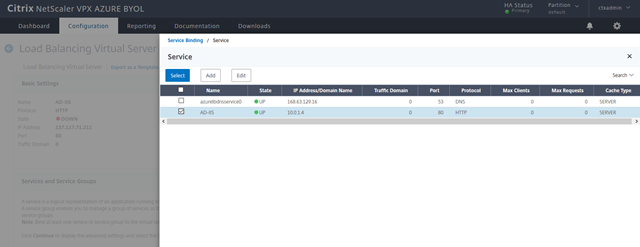

For testing purposes, I will add a service that points to my IIS service on port 80 and add a virtual server to load balance this service.

I am using the frontend IP created by default with the HA template, and I will add that IP address directly on NetScaler as the VIP. Every new service that uses the same port will require a new frontend IP; if the port is different, the same frontend IP can be reused. Either way, a new load balancing rule is required.

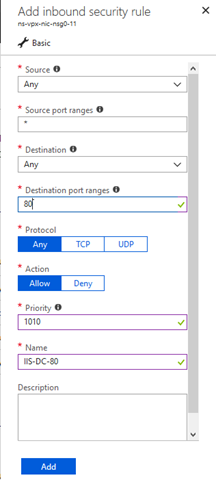

The port to be published should be opened on the NSG of the 2nd NIC attached to each NetScaler, because those are the NICs that Azure ALB is trying to reach.
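Since it is easy to open the port on one node and forget the other, a quick sanity check like the one below can help. It is a sketch over hand-written data, not a query against Azure: the NIC names and the `nics_missing_port` helper are made up for illustration.

```python
from typing import Dict, List, Set


def nics_missing_port(nsg_allowed_ports: Dict[str, Set[int]], port: int) -> List[str]:
    """Return the NICs whose NSG does not yet allow inbound traffic on `port`."""
    return sorted(nic for nic, allowed in nsg_allowed_ports.items() if port not in allowed)


# Hypothetical state: port 80 was opened on NS1's second NIC but not on NS2's.
nsg_allowed_ports = {"NS1-nic2": {80}, "NS2-nic2": set()}
print(nics_missing_port(nsg_allowed_ports, 80))  # ['NS2-nic2']
```

An empty list means the service port is open on the second NIC of both nodes, so the service stays reachable after a failover.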

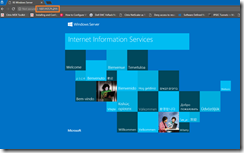

Accessing the frontend IP on port 80 is now successful: based on the load balancing rule that the HA template created by default for port 80 and that specific frontend IP, we are able to access the service hosted on NetScaler.

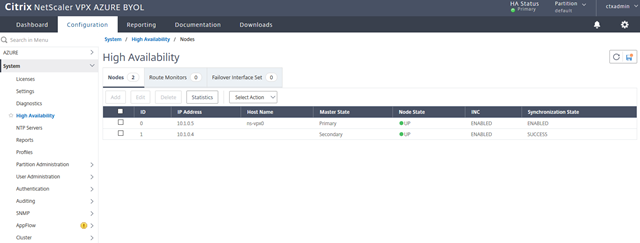

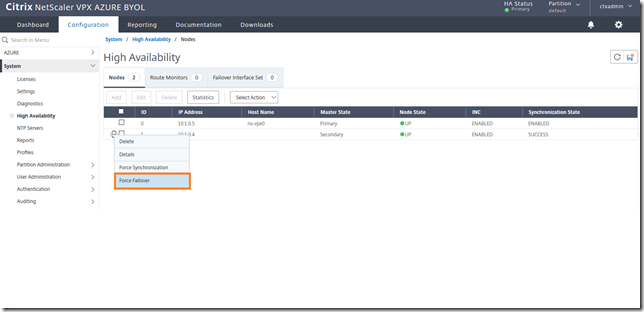

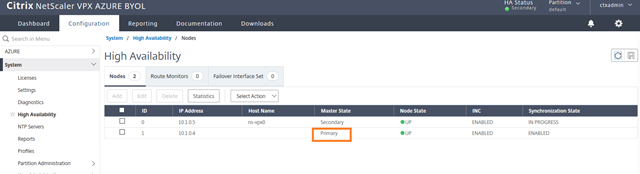

Fail over from the primary NetScaler to the secondary NetScaler and test again to make sure that the assigned frontend IP has floated to the 2nd NetScaler and the service is still accessible on the same public IP.

For every publicly accessible virtual server to be hosted on NetScaler, the following needs to be created. If an internally accessible virtual server is required, just create an internal load balancer and do the same (the only difference is that the ALB IP is private, not public):

Conclusion:

The HA template approach with INC and DSR is the optimal way of doing Active-Passive HA for NetScaler on Microsoft Azure. Let me know in the comments below if the above is not clear or if any help is required.

Salam 🙂 .

Very good explanation. Thanks a lot

Thank you.

Thank you so much for the details but am still stuck.

Could you mail me please, So I could explain the issue?

Send me a message through the contact us form with your issue and I will look into it. Thanks.

This is outstanding work, many thanks! Really fills in the missing links to understand what’s going on in between Azure and the NetScalers.

Thank you Ralph .

Excellent Valuable Information

Thank you .

Nice job, but wow my Netscaler work just tripled. So we add more nics for each Snip? And for multiple VIP (vservers) it needs it’s own ALB (pip)?

ALB, at least at the time of posting, was a requirement, because if you created an LB VIP on one of the NetScalers, how would it fail over to the 2nd one when the VIP on the LB is directly attached to the NIC of the first one and cannot float? As for the additional NICs, I don’t remember needing additional NICs for each SNIP, but then again I have not reviewed this since its posting. Let me know how it goes with your deployment and maybe we can have a look together; I need to refresh my information on this.