Introduction:

In a recent engagement, one of my customers had a Citrix XenApp and XenDesktop Cloud Service deployment spanning multiple Microsoft Azure regions, all of them active. Issues with resource aggregation and corrupt profiles started to emerge because of how Active-Active sites behave when they are geographically dispersed.

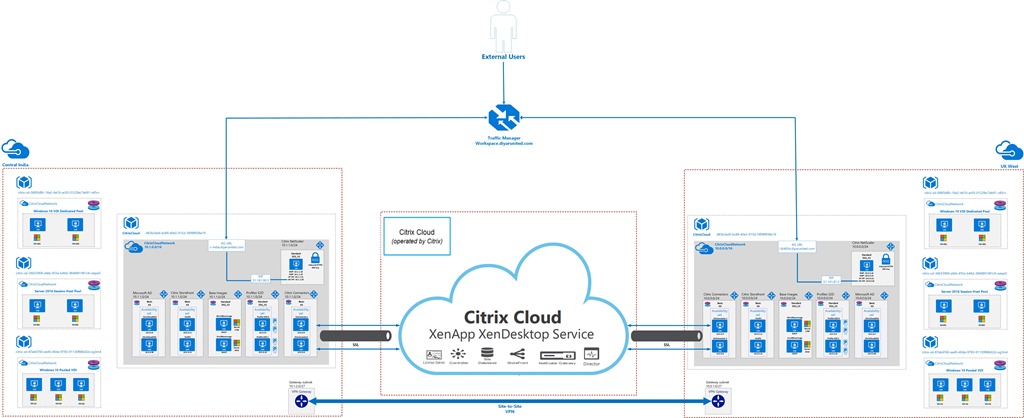

Architecture:

In the architecture referenced below, we have a highly available VDI environment configured in each region, with the two connected through an Azure Site-to-Site VPN Gateway between Central India and UK West. Azure Traffic Manager directs user traffic based on proximity, so users are always sent to the nearest region. The actual architecture has more than 5 regions, but only 2 are depicted below for the sake of this post.
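To make the proximity idea concrete, here is a minimal conceptual sketch in Python. It is not how Azure Traffic Manager is implemented; the `pick_region` function, the latency figures, and the health flags are all made up for illustration, assuming that "proximity" boils down to choosing the healthy region with the lowest latency for the user.

```python
from typing import Dict


def pick_region(latency_ms: Dict[str, float], healthy: Dict[str, bool]) -> str:
    """Return the healthy region with the lowest latency (conceptual model only)."""
    candidates = {r: ms for r, ms in latency_ms.items() if healthy.get(r, False)}
    if not candidates:
        raise RuntimeError("no healthy region available")
    return min(candidates, key=candidates.get)


# Hypothetical latencies seen by a user sitting close to Central India.
latencies = {"Central India": 12.0, "UK West": 140.0}

print(pick_region(latencies, {"Central India": True, "UK West": True}))
print(pick_region(latencies, {"Central India": False, "UK West": True}))
```

With both regions healthy the user lands in Central India; if that endpoint is marked unhealthy, the same user is sent to UK West instead.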

Each Azure region comprises the following components:

2 x StoreFront Servers (Availability Set)

1 x NetScaler (I prefer a single instance over the available NetScaler HA options on Azure)

2 x Microsoft Domain Controllers (Availability Set)

2 x Microsoft DFS-R (Availability Set for Profiles/Data Repository)

2 x Citrix Cloud Connectors (Availability Set)

1 x Win10 Base Image

1 x Server2016 Base Image

3 x Virtual Desktop Catalogs in each region

Azure Traffic Manager

Azure VPN Gateway (now that Global VNet Peering is GA in almost all regions, use that instead!)

I am not going to walk through the detailed configuration needed to build a working Active-Active cloud environment. Instead, I will touch on the issues we faced: resource aggregation between Citrix Cloud and local StoreFront servers, and the well-known unsupported DFS-R configuration for profiles and data.

Multi-Site Resource Aggregation:

Optimal HDX Routing and StoreFront icon aggregation are great ways of making sure that users do not get multiple icons for the same resource published in different zones, and that resources are launched from the local site rather than across the WAN. Our issue was that we wanted some resources to be visible in some sites for all assigned users even when they share the same virtual desktop or application name, and other resources to be hidden in specific sites, depending on which region Azure Traffic Manager forwards the user to.

Citrix Cloud XenApp and XenDesktop Service uses zones to logically segregate connectors, domains, and resources, but does not do so for user assignments made through Library. Assume user1 has access to multiple resources with the same name in different zones: all of these resources are visible to the user after logging in to any StoreFront and Access Gateway in the environment, regardless of which region Traffic Manager sends them to.

Put simply, we wanted users connecting to Central India to see all available resources from all regions, while users connecting to UK West see only the resources aggregated in the UK West zone, assuming of course that the users have been granted access to both through Library. Since local StoreFront servers are being used, the best way to achieve this granularity was to combine Delivery Group/Application KEYWORDS with StoreFront KEYWORDS filtering.

I had my doubts at first about whether Library offerings would work with delivery group KEYWORDS, but they did work with an on-premises StoreFront. Would this work with the cloud-hosted StoreFront? I tried loading the XA and XD SDK to give it a shot, but it does not include the StoreFront cmdlets, so it wasn't possible to change store settings. Could you open a case with Citrix for this? I will try and let you know, but I don't think so …

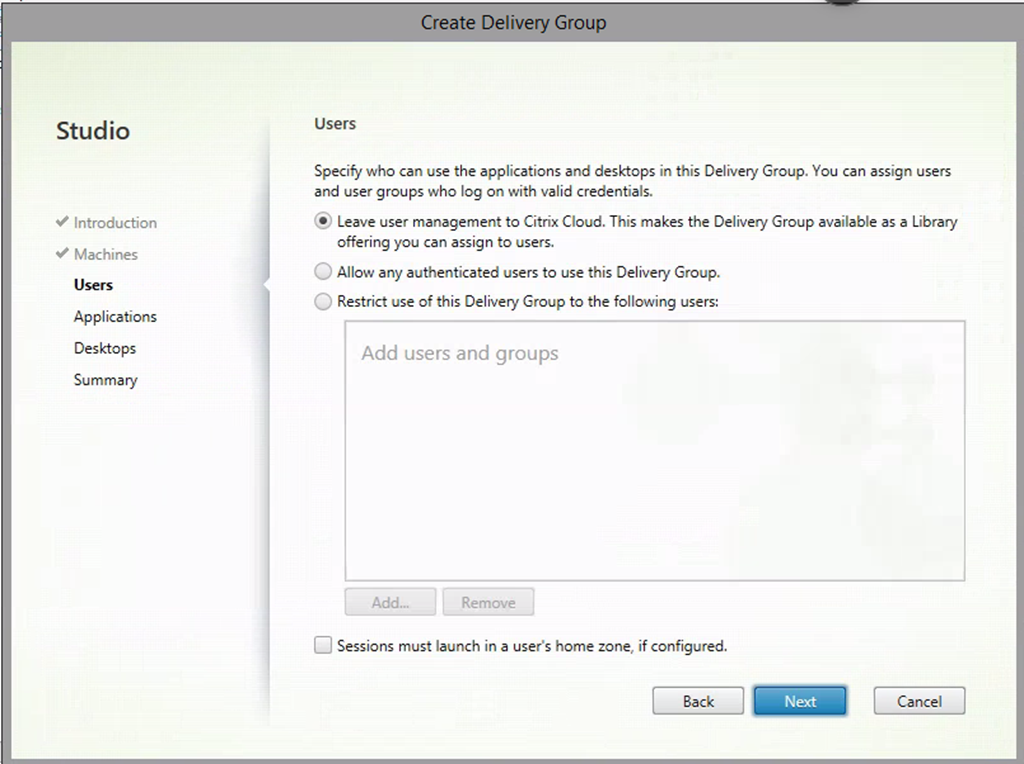

1- Make sure that access is provided either through Library offerings or through delivery group assignments; both work fine:

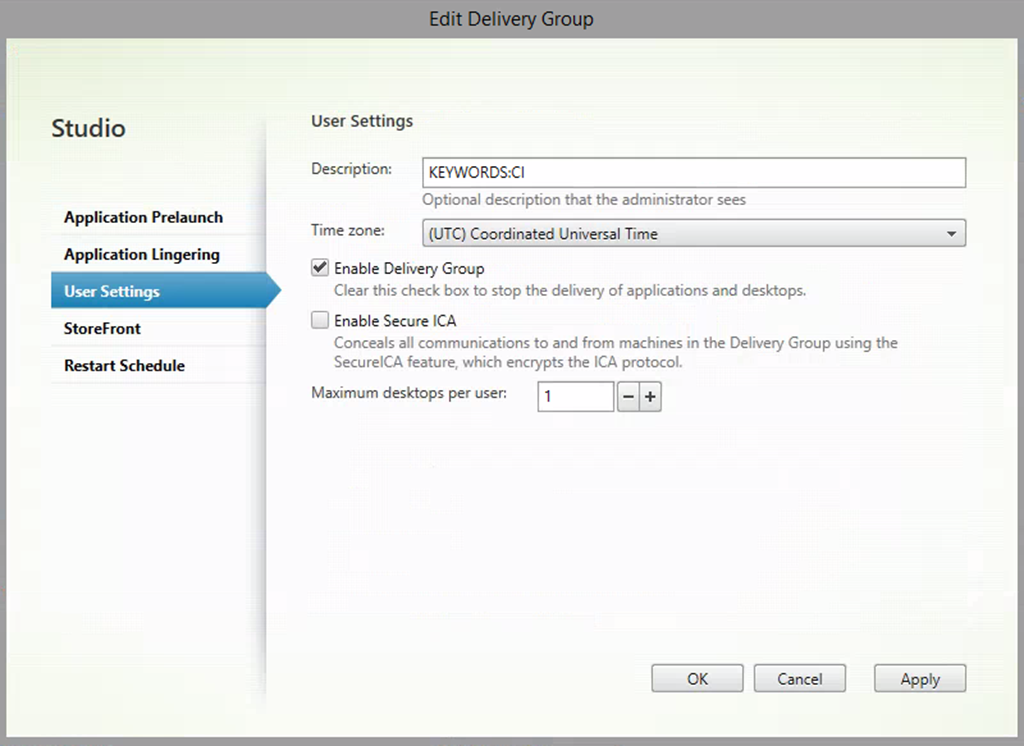

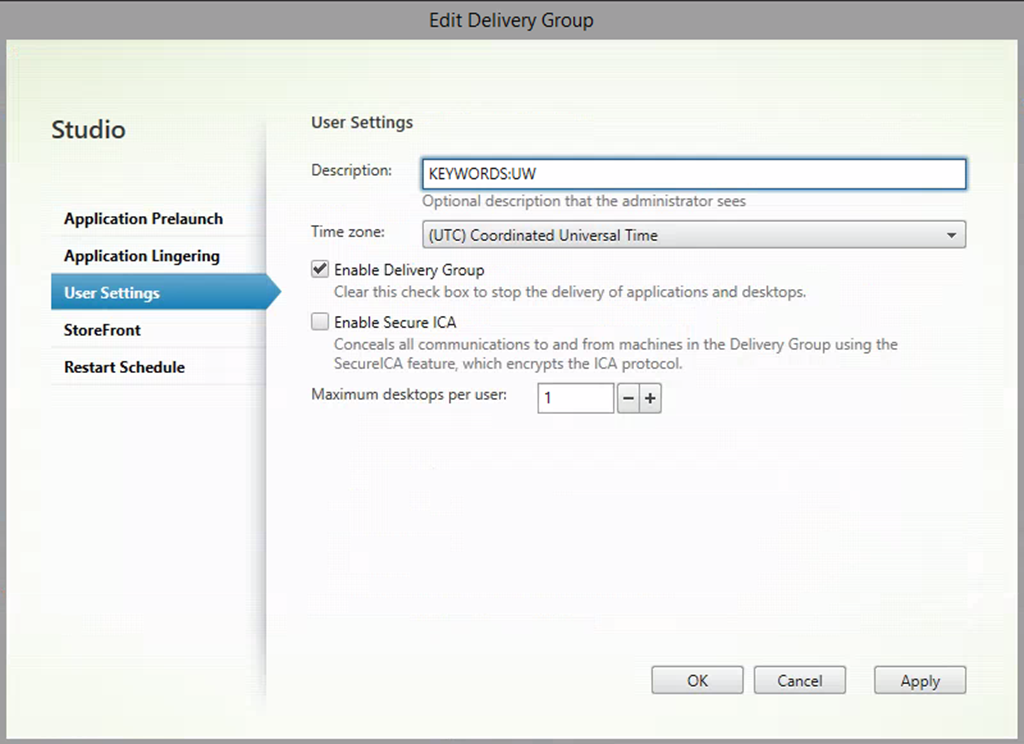

2- To configure StoreFront in each site to aggregate resources from specific delivery groups, we first need to assign a keyword to every delivery group to distinguish it. Multiple keywords can be added by separating them with a space. I base mine on Azure regions, so CI is Central India and WU is UK West:

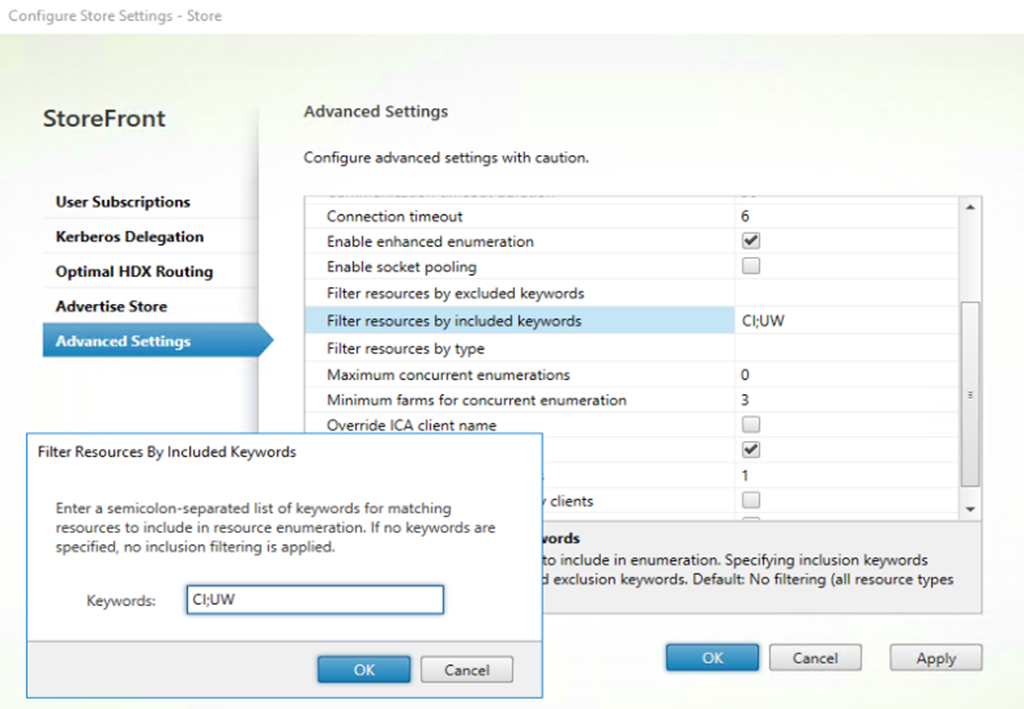
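As a rough illustration of the space-separated keyword convention, here is a small Python sketch that pulls keywords out of a description string. It assumes the keywords live in the description field after a `KEYWORDS:` prefix; the `parse_keywords` helper and the sample descriptions are made up for this post and are not part of any Citrix SDK.

```python
import re
from typing import Set


def parse_keywords(description: str) -> Set[str]:
    """Extract space-separated keywords that follow a 'KEYWORDS:' prefix.

    Assumption: everything after the prefix, up to the end of the line,
    is treated as a keyword list.
    """
    match = re.search(r"KEYWORDS:(.*)", description, flags=re.IGNORECASE)
    if not match:
        return set()
    return {kw.upper() for kw in match.group(1).split()}


# Hypothetical delivery group descriptions.
print(sorted(parse_keywords("Win10 pooled desktops KEYWORDS:CI WU")))
print(sorted(parse_keywords("No keywords assigned")))
```

Normalising to upper case keeps `ci` and `CI` from being treated as two different keywords when they are compared later.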

3- Because we want users forwarded by Azure Traffic Manager to Central India to have access to resources published in both Central India and UK West, we add both keywords, separated by a semicolon, in the advanced settings of the Central India StoreFront store:

When users are forwarded to the Central India Access Gateway and eventually the Central India StoreFront server, it displays only resources (delivery groups, desktops, and applications) that carry the CI or WU keyword. Any other resource is not displayed, since we used the Filter Resources By Included Keywords setting.

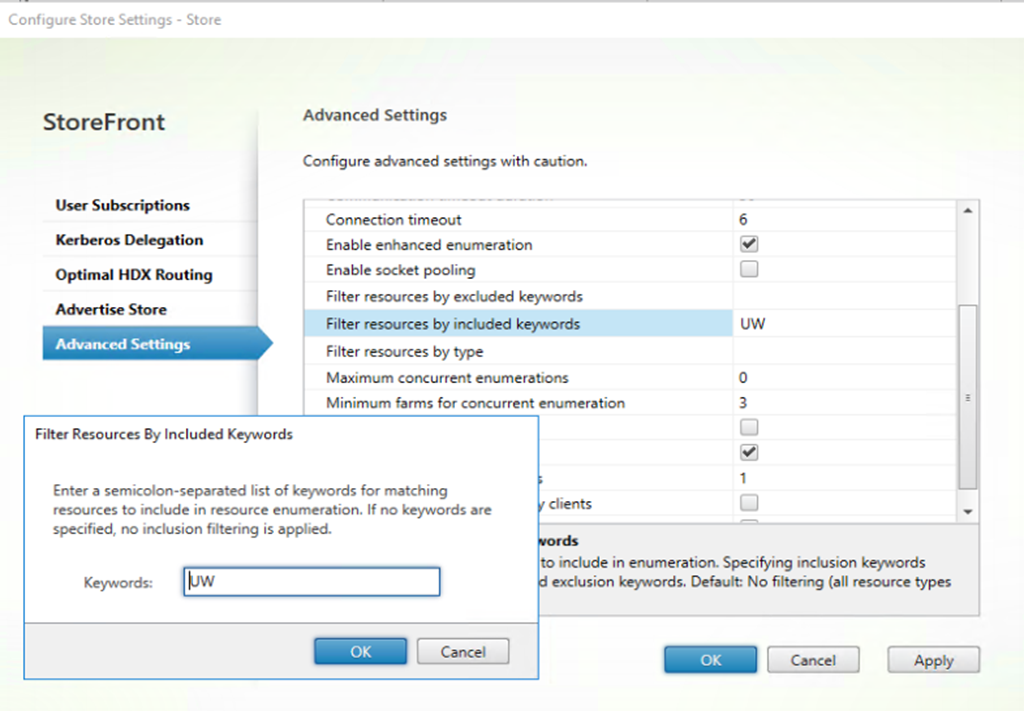

On the UK West StoreFront server, we add only the WU keyword, so only delivery groups, desktops, and/or applications with that keyword are displayed.

The bottom line is that users logging in to Central India can see resources from both Central India and UK West, while users logging in to UK West can see only resources from UK West. Which store they land on depends on where Azure Traffic Manager forwards them, based on proximity, when they connect.
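The filtering behaviour described above can be modelled in a few lines of Python. This is only a sketch of the include-keyword logic as I understand it, not StoreFront code: the `Resource` class, the `visible_resources` function, and the sample catalog are hypothetical, and the semicolon-separated filter string mirrors the way the keywords are entered in the store's advanced settings.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List


@dataclass(frozen=True)
class Resource:
    name: str                 # display name shown to the user
    zone: str                 # zone hosting the resource
    keywords: FrozenSet[str]  # keywords assigned to its delivery group


def visible_resources(resources: Iterable[Resource], store_filter: str) -> List[str]:
    """Return labels of resources carrying at least one included keyword.

    store_filter mimics the semicolon-separated include list, e.g. "CI;WU".
    """
    included = {kw.strip().upper() for kw in store_filter.split(";") if kw.strip()}
    return [
        f"{r.name} ({r.zone})"
        for r in resources
        if included & {kw.upper() for kw in r.keywords}
    ]


# Hypothetical catalog: same desktop name published in both zones.
catalog = [
    Resource("Win10 Desktop", "Central India", frozenset({"CI"})),
    Resource("Win10 Desktop", "UK West", frozenset({"WU"})),
    Resource("Untagged App", "UK West", frozenset()),
]

print(visible_resources(catalog, "CI;WU"))  # Central India store
print(visible_resources(catalog, "WU"))     # UK West store
```

The Central India store lists the desktop from both zones, the UK West store lists only its own, and the untagged application shows up in neither because an include filter hides anything without a matching keyword.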

Profiles, DFS-R, Azure, and Active-Active … Say What !!!

Let's start by stating that profile replication, however it is configured, does not depend on whether it's done in the cloud or on-premises, as long as the same underlying technology is being used, which is most probably DFS-R or NAS replication. Because our options are limited (Liquidware Labs ProfileUnity and FSLogix are changing this now by supporting Azure Blob …), at least when using UPM and Folder Redirection to SMB/CIFS shares, DFS-R makes the most sense, especially in the cloud.

I won't repeat the whole explanation of why active-active replication of profiles is supported by neither Microsoft nor Citrix, because I have already detailed it in the posts below, so kindly read them before continuing:

Citrix XenDesktop 7 VDI Active-Active/Passive Multi-Site Disaster Recovery Part 1

Citrix XenDesktop 7 VDI Active-Active/Passive Multi-Site Disaster Recovery Part 2

Now that Microsoft Azure Global VNet Peering is supported in almost all Azure regions except China and government, I propose a solution that would solve this once and for all. As of now, I have NOT received word from Microsoft on whether it's a supported configuration. Check it out here:

That being said, the only way I was able to tackle a scenario where active-active VDI sites were used extensively, with users changing regions frequently, was to use DFS-R for replication and to segregate virtual desktops using OUs and GPOs based on region, pointing users to the local share of each site depending on which Azure region they are logging on to. This is detailed in Part 1 of the posts mentioned above.
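The region-to-share mapping that the per-region OUs and GPOs enforce can be pictured with this short Python sketch. In the real environment the path is set by policy, not by code; the file server names, share names, and the `profile_path` function are all hypothetical and only illustrate that each region resolves to its own local share.

```python
# Hypothetical local profile shares, one per Azure region.
PROFILE_SHARES = {
    "Central India": r"\\ci-fs01\profiles$",
    "UK West": r"\\wu-fs01\profiles$",
}


def profile_path(region: str, username: str) -> str:
    """Build the profile path on the share local to the user's logon region."""
    try:
        share = PROFILE_SHARES[region]
    except KeyError:
        raise ValueError(f"no profile share defined for region {region!r}") from None
    return f"{share}\\{username}"


print(profile_path("Central India", "user1"))
print(profile_path("UK West", "user1"))
```

The same user resolves to a different local share in each region, and DFS-R is what keeps the two copies in sync behind the scenes.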

The first downside on-premises was that when the profile/data storage repository local to a site was down, either the path had to be changed manually for users logging in to that site, or those users had to be forwarded to a different site. In the cloud this is much more stable, because 99.X% availability is far better than what any customer would have on-premises, and those file servers are less prone to hardware failure or other downtime requirements.

Second, because of WAN bandwidth and latency limitations, we had to assume that when file services are down in a specific region, the whole site is down, since we don't connect to the namespace. Users then need to be forwarded to another site so that they don't traverse the WAN for profiles and data, which requires manual intervention. With the cloud and Global VNet Peering, this is no longer an issue, especially if both regions are within the same boundary. My testing showed a latency of 4ms between UK West and UK East, which is close to what an L2 network would have, which is great.

Update: I received a couple of questions on why an S2D Failover Cluster with DFS-R needs to be used in this active-active scenario instead of pure DFS-R, which conceptually is enough.

Because we point to the local share that is replicated by DFS-R in each region and not to the DFS namespace, we can only point to one file share location in that region, so the sole file server hosting the share becomes a single point of failure. Running an S2D Failover Cluster with DFS-R on top allows us to point to the clustered share path in each region, which is backed by two file servers rather than one, thus mitigating the single point of failure.
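A quick back-of-the-envelope calculation shows why two file servers behind one clustered path help. The sketch below assumes the nodes fail independently and uses a made-up 99.9% per-server availability; neither figure nor the `parallel_availability` helper comes from Microsoft or Citrix, and real clusters share failure modes that this simple model ignores.

```python
def parallel_availability(per_node: float, nodes: int) -> float:
    """Availability of a share that stays up while at least one node is up.

    Assumes independent node failures, which is a simplification.
    """
    return 1.0 - (1.0 - per_node) ** nodes


MINUTES_PER_YEAR = 365 * 24 * 60

per_server = 0.999  # hypothetical availability of a single file server

for nodes in (1, 2):
    availability = parallel_availability(per_server, nodes)
    downtime = (1.0 - availability) * MINUTES_PER_YEAR
    print(f"{nodes} node(s): {availability:.6f} available, "
          f"about {downtime:.1f} minutes of downtime per year")
```

Under these assumptions the expected yearly downtime of the share drops by roughly three orders of magnitude when a second node backs the same path.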

Configure Citrix Cloud User Profiles on Microsoft Azure Storage Spaces Direct

How you choose to tackle this subject is always a dilemma, because there is a thin line between a configuration that mitigates the reason vendors don't support it and the vendors' actual support statement, and whether they would actually support it … I have seen that Citrix and Microsoft are, more often than not, hesitant to tackle configuration questions related to profile replication and access in multi-site deployments, for obvious reasons: it's too risky for them!

Conclusion:

It's inevitable that we move to the cloud because of the opportunities it opens up for solving challenges that have haunted on-premises deployments for some time now. How and when we do it will be dictated, in my opinion, by the magnitude of the challenges currently being faced. If you look at the bits and pieces of the awesome VDI Like a Pro State of EUC 2018 released so far, DaaS is among the top EUC initiatives, and Migrate to the Cloud is among the biggest challenges for on-premises environments. I see lots of potential in the years to come!

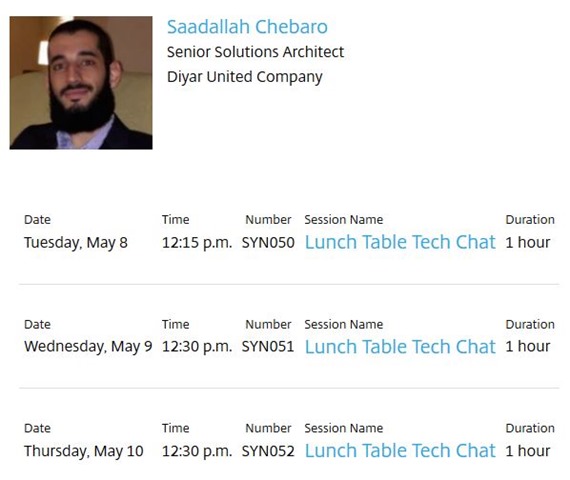

Coming to Citrix Synergy 2018? Interested in Citrix Cloud Service offerings? I will be hosting a lunch table tech chat on Citrix Cloud Services, so make sure you drop by.

Salam.
