VMware Horizon 7 VDI Active-Active/Passive Multi-Site Disaster Recovery Part 2

VMware Horizon 7 Multi-Site with Microsoft Azure GSLB Traffic Manager

Introduction:

Finally, VDI (Virtual Desktop Infrastructure) has taken off as it should have years ago, making 2017 undisputedly the year of VDI. Recent and rapid advancements in technology (HCI, Cloud, Graphics, SDN, Endpoints, Automation, Security …), pricing, and workspace are now mature enough to place VDI at the forefront of business/IT demand.

VDI deployments are no walk in the park. So many components are involved in a VDI environment that everything has to be correctly in place for a successful result. Still, the post-deployment operational advantages outweigh the hectic work required before deployment, so planning is of the essence.

That being said, “With great power comes great responsibility”. Giving your users the luxury of an Anywhere, Anytime, Any Device workspace and then taking it away for any reason, be it a catastrophe or a minor datacenter glitch, is not an option in the world we live in and the workspace environment we have become accustomed to.

This is where Multi-Site Active-Active or Active-Passive Disaster Recovery VDI infrastructure design and deployment comes into play. Initial design planning for VDI availability between sites/regions should be well thought out, as Virtualization, Storage, Networking, and Security components tie together with a very specific configuration to provide a highly available environment with minimal downtime, if any.

Scenario:

Multi-Site high availability for VDI comes in different forms and flavors, as it depends on many variables, especially networking. I have worked on several different scenarios, but the specific one covered here should be a good starting point for an availability strategy that can be adapted to different environments.

First things first: the scenario I am going to cover and deploy is NOT supported by Microsoft with regard to profile/data folder replication. The reason is that DFS-Replication cannot handle simultaneous writes to a replicated folder on both ends of the namespace, which causes sync/replication issues. Because I am looking to configure a true active-active scenario, the trick is to make sure that users are not able to open/write data from 2 different sites at the same time, and so we shall.

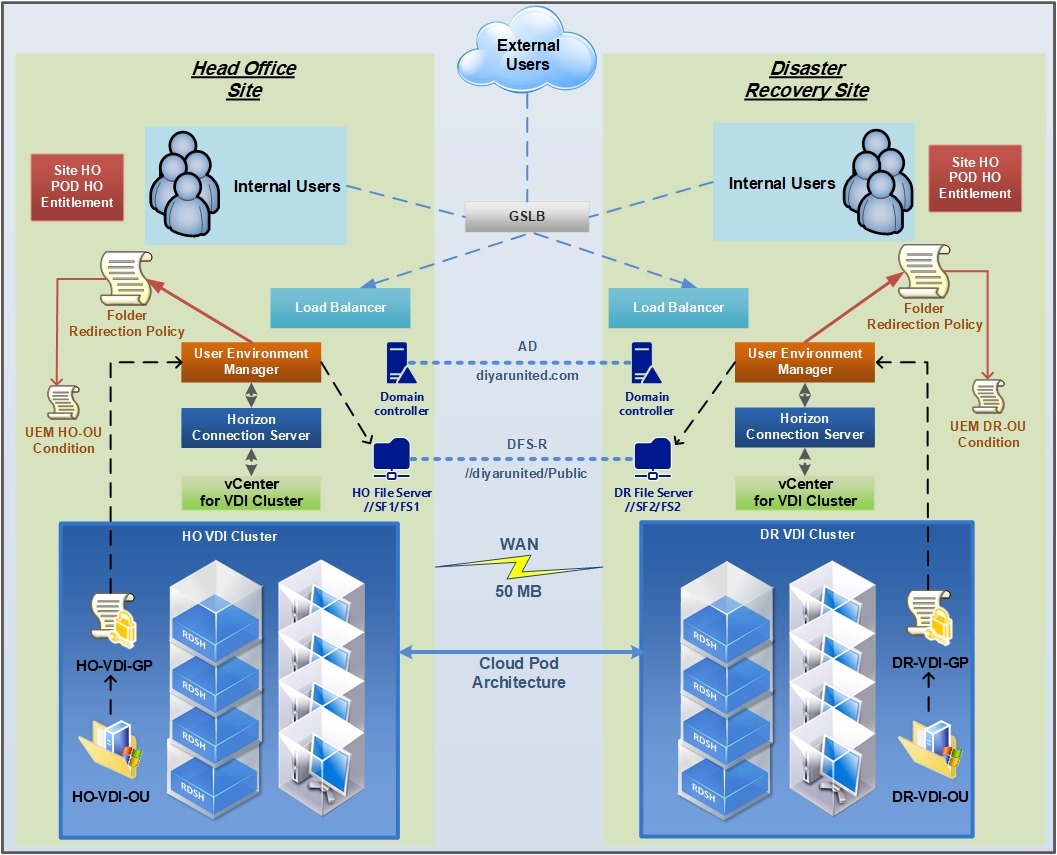

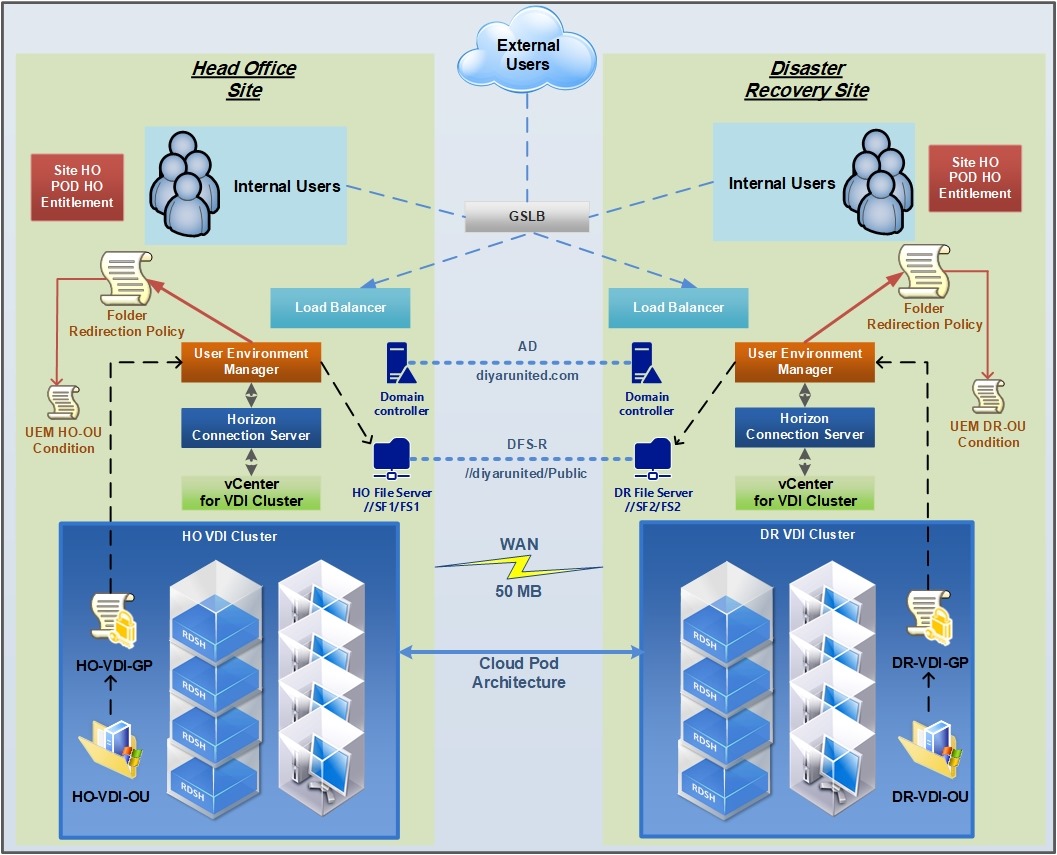

From an infrastructure perspective, both sites are part of the same domain name structure, with each site having a couple of GC domain controllers. A Microsoft DFS-R (Distributed File System Replication) namespace is configured for both sites, utilizing one file server cluster with 2 file servers in the Head Office (HO) site and one file server cluster with 2 file servers in the Disaster Recovery (DR) site. Each site has a DHCP server and an independent vCenter/vSphere deployment.

We have 2 independent sites connected by an IPsec tunnel with 50MB bandwidth on the WAN link. Each site has an application delivery controller (F5 or NetScaler) acting as a load balancer and Global Server Load Balancer (GSLB). 2 GSLB virtual servers exist, one for external users and one for internal users, both configured with site proximity. 2 VMware Access Points are deployed at each site, load balanced by the F5 or NetScaler, acting as access gateways to each VDI environment. Again, all of these components are deployed and configured independently at each site.

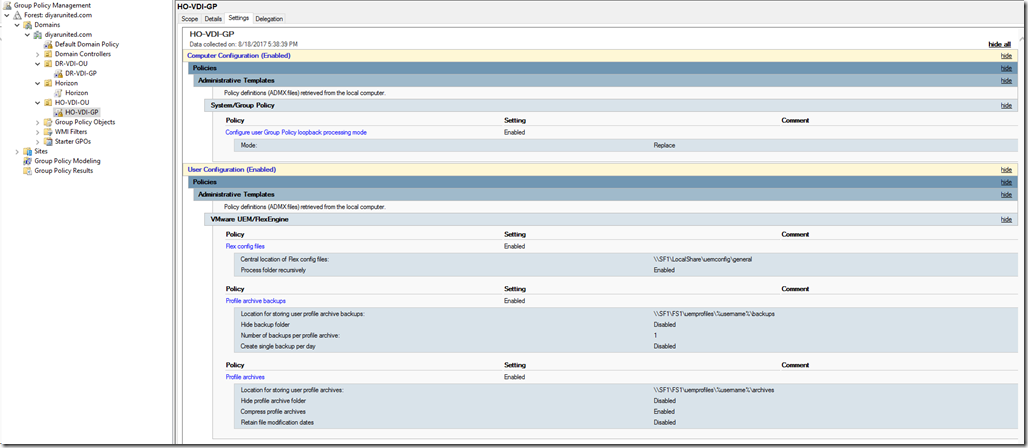

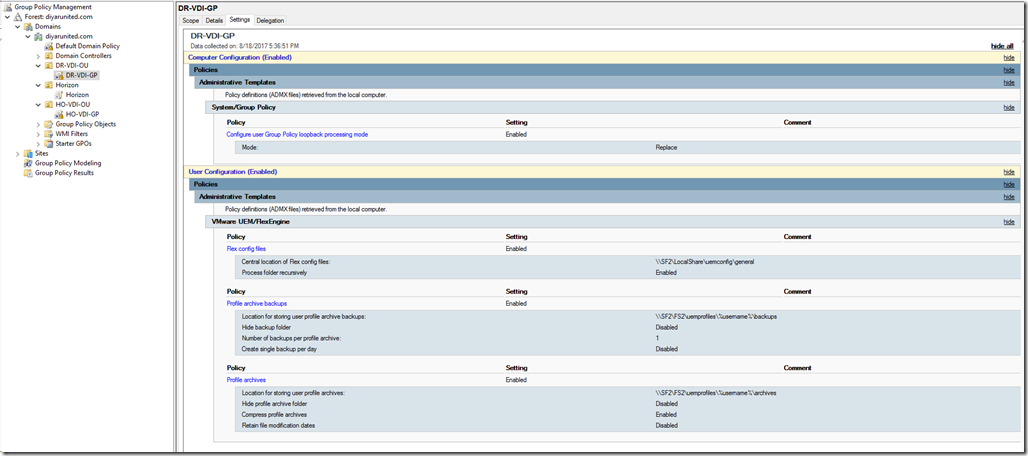

Each site hosts 2 load-balanced connection servers, 1 composer server, and 2 load-balanced App Volumes/UEM management consoles. Each site has a pool of virtual desktops created from master images that were prepared in HO and replicated to DR to avoid preparing images twice. Only the base images are replicated; the desktop pools themselves are provisioned independently in each site into different OUs (Organizational Units). Each OU has a group policy linked to it that points the VDI machines to the UEM configuration file share, which is hosted independently in the local site. The same UEM and App Volumes instances could be shared between sites, but that would require additional configuration and an SQL dependency. I am trying to keep this a simpler configuration that can be built upon, so they are configured as totally independent.

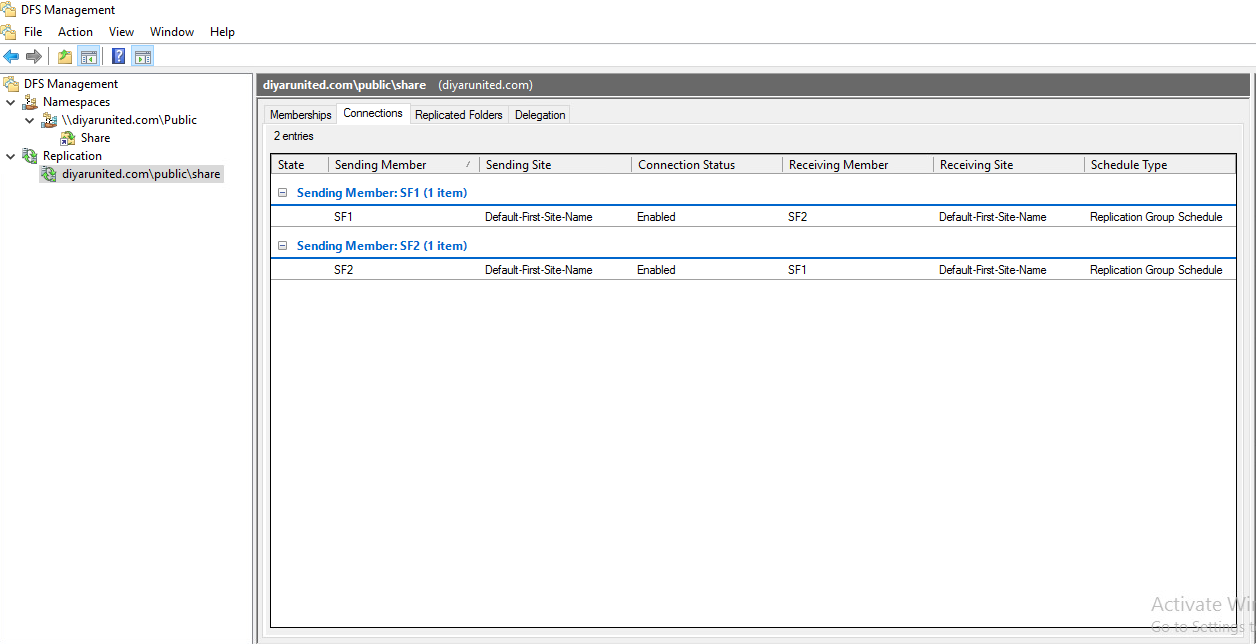

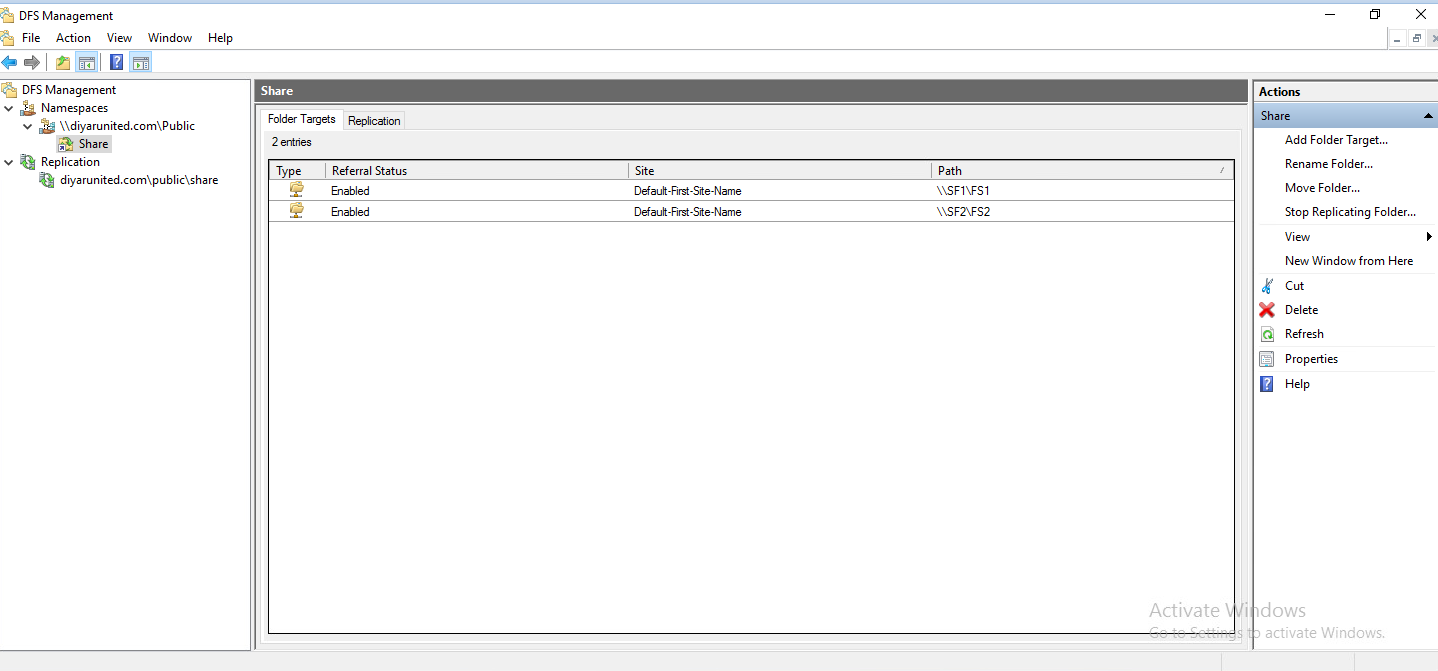

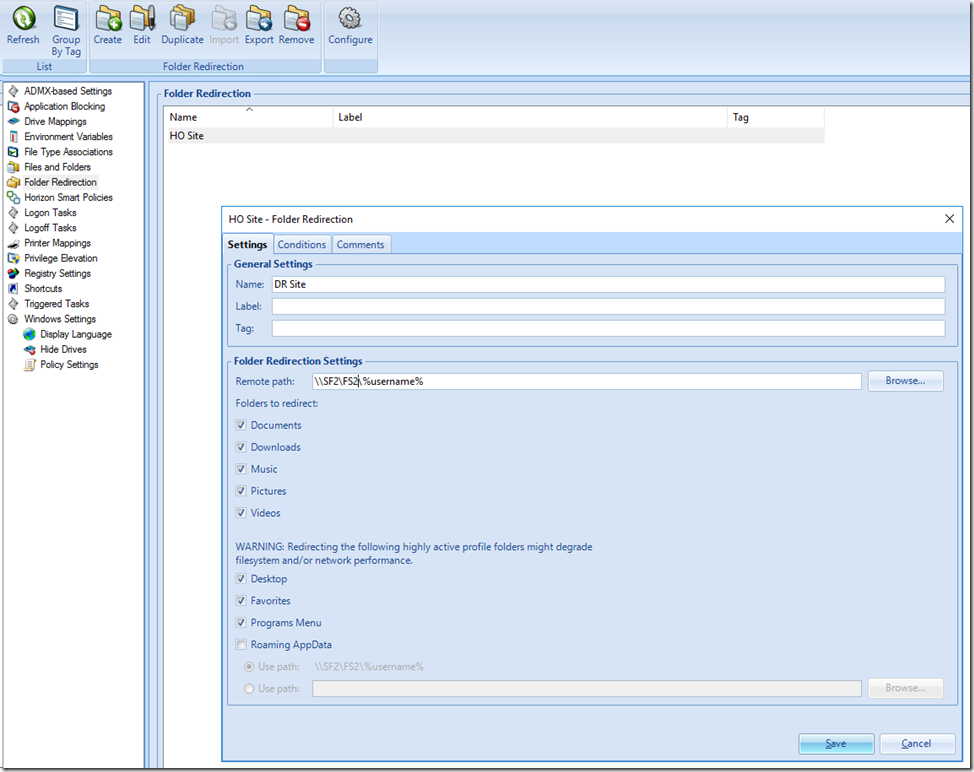

UEM Profiles and Data (Folder Redirection) folders in HO are replicated through DFS-R to DR. This is the only shared configuration between both sites. In HO the file cluster is named “SF1” and the shared folder hosting UEM Profiles/Data is called “FS1”. In DR the file cluster is named “SF2” and the shared folder receiving the replicated Profiles/Data from HO is called “FS2”. The DFS-R namespace could be named anything because we are not going to use it to connect to our folders; we only need it to configure replication. We now have 2 shares holding user Profiles/Data, replicating between //SF1/FS1 in HO and //SF2/FS2 in DR. The UEM configuration file share is not replicated, only profiles and folder redirection data. Don’t forget Group Policy loopback processing …

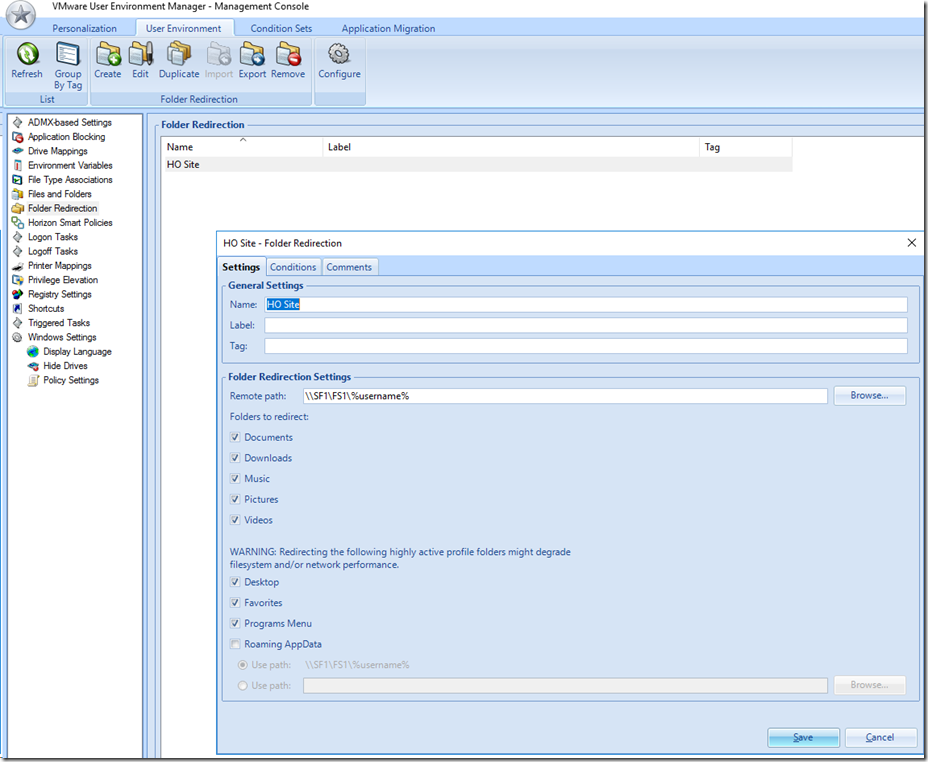

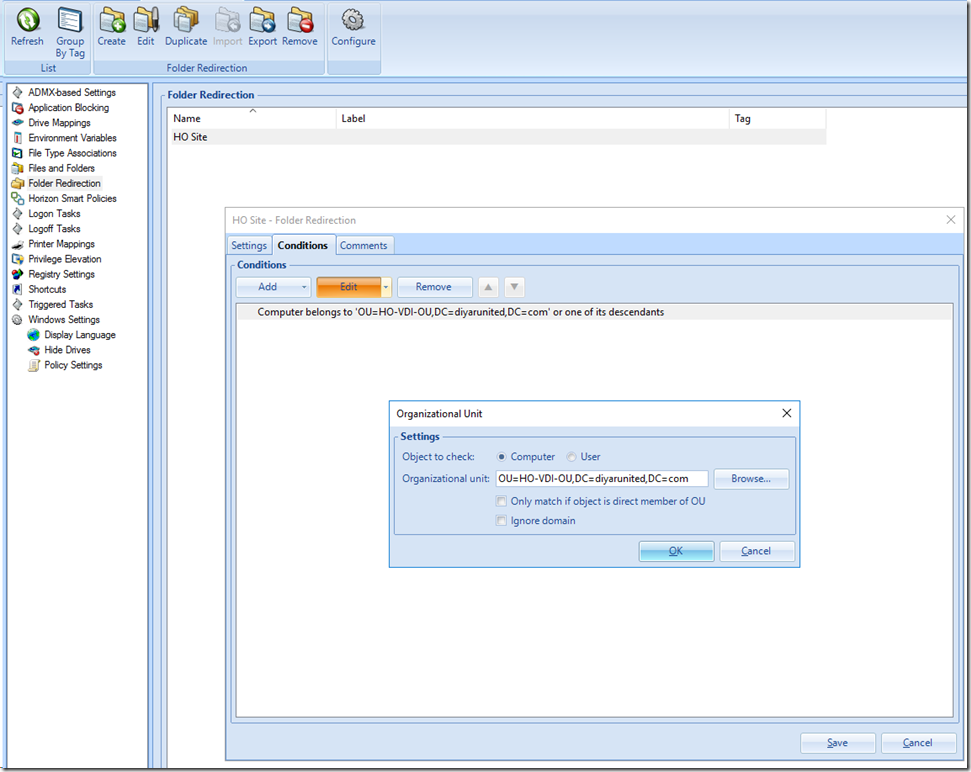

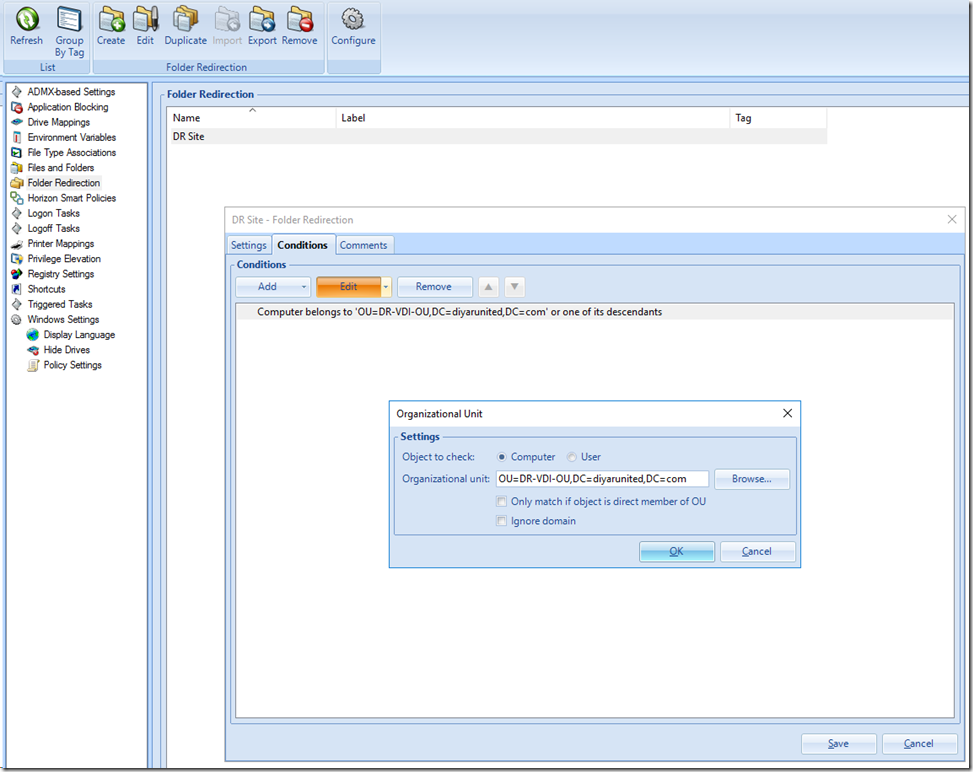

UEM in HO contains a User Environment Policy “Folder Redirection” which covers (Desktop, Documents, Favorites, ..) and points to //SF1/FS1. This “Folder Redirection” policy in HO has a condition configured which instructs UEM to apply the policy only when users are logging in to computer accounts in the HO VDI OU.

UEM in DR contains a User Environment Policy “Folder Redirection” which covers (Desktop, Documents, Favorites, ..) and points to //SF2/FS2. This “Folder Redirection” policy in DR has a condition configured which instructs UEM to apply the policy only when users are logging in to computer accounts in the DR VDI OU.

Horizon Cloud Pod Architecture is configured with the Head Office site and the Disaster Recovery site added to the federation. Assign users to a global entitlement so that they see only a single icon. Home sites can be assigned to users if they are fixed to one location. Now this drawing should make a bit more sense.
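The per-site condition can be pictured as a lookup from the computer account's OU to the local share. The following is only a minimal sketch of that decision in Python, not how UEM evaluates conditions internally; the OU names `HO-VDI` and `DR-VDI`, the domain `example.local`, and the function `redirection_share` are hypothetical, while the share paths are the ones used in this article.

```python
from typing import Optional

# Hypothetical OU names for illustration; the share paths come from the article.
SITE_SHARES = {
    "OU=HO-VDI": "//SF1/FS1",  # Head Office file cluster and share
    "OU=DR-VDI": "//SF2/FS2",  # Disaster Recovery file cluster and share
}


def redirection_share(computer_dn: str) -> Optional[str]:
    """Return the local share for the site OU that contains the computer.

    Naive parsing: splits the distinguished name on commas and ignores
    escaped commas, which is enough for a sketch.
    """
    components = [part.strip().upper() for part in computer_dn.split(",")]
    for ou, share in SITE_SHARES.items():
        if ou.upper() in components:
            return share
    return None  # computer is in neither VDI OU, so no policy applies


print(redirection_share("CN=VDI-HO-001,OU=HO-VDI,DC=example,DC=local"))
print(redirection_share("CN=VDI-DR-001,OU=DR-VDI,DC=example,DC=local"))
```

Because the decision is keyed on the computer account and not on the user, the same user automatically gets the share of whichever site the desktop lives in.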

- An external user logs in to the external GSLB address of the VDI environment.
- Proximity load balancing points the user to the geographically nearest site.
- The user launches a pooled or session-based desktop.
- During login, UEM recognizes that the computer account is in the local site OU and applies the UEM Profile and Folder Redirection policy pointing to the DFS-R share in the same site.
- Any Profile/Data change made by the user is replicated to the second site's file share using DFS-R.
- In DFS-R the last write always wins, so even if the user logs off and is then directed to the second site, any change made there is replicated back to the first site, and so on.
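To see why “last write wins” is harmless for sequential logins but dangerous for simultaneous ones, here is a simplified Python model of the rule. It is only an illustration of the behavior described above, not DFS-R's actual implementation; `FileVersion`, `resolve`, and the timestamps are made up for the example.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class FileVersion:
    site: str        # site where the write happened
    modified: float  # write time (arbitrary units)
    content: str


def resolve(a: FileVersion, b: FileVersion) -> Tuple[FileVersion, FileVersion]:
    """Return (winner, loser): the most recent write wins."""
    return (a, b) if a.modified >= b.modified else (b, a)


# Sequential use: the DR edit was made after the HO edit had replicated,
# so the newer version already builds on the older one and nothing is lost.
ho_edit = FileVersion("HO", 100.0, "report v1")
dr_edit = FileVersion("DR", 200.0, "report v1 + DR additions")
winner, loser = resolve(ho_edit, dr_edit)

# Simultaneous sessions: both sites changed the same file independently.
# One version is kept and the other site's changes are discarded.
ho_concurrent = FileVersion("HO", 300.0, "HO changes")
dr_concurrent = FileVersion("DR", 301.0, "DR changes")
kept, discarded = resolve(ho_concurrent, dr_concurrent)
print(kept.site, discarded.site)  # prints "DR HO"
```

The second case is the one the whole design tries to prevent: the rule still picks a winner, but the user silently loses whatever was written at the other site.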

Remember that all we need for an active-active multi-site VDI configuration is a consistent user experience and consistent data. The most important, and honestly the only, core requirement is for the user to see the same profile and data when logging in to any site, making it a seamless experience. All other components, replicated or not, do not affect the user experience, which is the ultimate goal of any VDI environment.

Considerations:

- A user must NOT NOT NOT have multiple sessions open from different sites at the same time, since each site would be writing to the same replicated folder from its own end, thus causing corruption. The configuration listed above makes sure of that. Do note, however, that DFS-R cannot handle multiple writes at the same time from two ends of the same replicated folder, which is why this setup is not supported by Microsoft. It nevertheless works 100% when everything is configured so that a user only ever has open sessions pointing to one of the replicated folders.
- Force the user to log off upon disconnection. If for some reason the user moves to another location, the user will be directed to the nearest site, which may be different from the site where the session is currently open. The user then gets a new virtual desktop which loads the profile from its local share, so we end up with 2 open profiles on the same replicated folder from different sites, which will corrupt the profile share as discussed earlier.
- Depending on how users connect and how often, it is advisable to keep DFS-R replication always on and not scheduled (this depends highly on environment variables and is not a core requirement). Also make sure the DFS-Replication topology is full mesh.
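The single-session rule behind these considerations can be stated as an invariant: at any moment a user maps to at most one site. The sketch below expresses that invariant in Python purely for illustration. `SessionRegistry` is hypothetical and is not a Horizon or UEM feature; in the setup described here the rule is enforced by forcing logoff on disconnect, not by code like this.

```python
from typing import Dict


class SessionRegistry:
    """Tracks which site currently holds each user's only session."""

    def __init__(self) -> None:
        self._active: Dict[str, str] = {}  # user -> site with the open session

    def can_log_in(self, user: str, site: str) -> bool:
        # Allowed if the user has no session, or the session is in this site.
        current = self._active.get(user)
        return current is None or current == site

    def log_in(self, user: str, site: str) -> None:
        if not self.can_log_in(user, site):
            raise RuntimeError(
                f"{user} already has a session in {self._active[user]}"
            )
        self._active[user] = site

    def log_off(self, user: str) -> None:
        # Forced logoff on disconnect frees the user to log in elsewhere.
        self._active.pop(user, None)


registry = SessionRegistry()
registry.log_in("user1", "HO")
blocked = not registry.can_log_in("user1", "DR")   # second site is refused
registry.log_off("user1")                          # logoff upon disconnection
allowed = registry.can_log_in("user1", "DR")       # now DR is safe to use
print(blocked, allowed)  # prints "True True"
```

A session that is merely disconnected but not logged off would still count as active in this model, which is exactly why forcing logoff on disconnection matters.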

Salam.

Great

Could the “GSLB virtual servers” be replaced with an F5 GTM or BIG-IP DNS module solution?

Yes, definitely, they are exactly that. Because someone might be using Citrix NetScaler or any other GSLB solution, I tried to keep the description generic.

Great and useful document. Thanks

How does a vSAN stretched cluster play into this? Are there any advantages to it for this type of Active/Active config?

Hi Doug,

Definitely, a vSAN stretched cluster plays a very big role in an Active-Active scenario because it removes the need to rely on a replication mechanism for data/profiles, which to me is the biggest showstopper for this scenario, not only from a Microsoft supportability perspective but also from a technical point of view in terms of replication type (sync/async), latency, and bandwidth. That is why I mentioned that a stretched cluster scenario, from a storage and networking perspective (even better if L2 is extended), has different considerations than dispersed sites with different storage devices and WAN links.

Is there a possibility to migrate the VM itself to the Passive site when we fail over?

Hi Avinash,

Let's start by stating that virtual desktop pools cannot be replicated, whether the virtual desktops are composed through Composer or are instant clones. Dedicated static virtual desktops can be migrated. All other servers can be migrated as long as the standard DR migration considerations are taken into account, such as IP changes, maintaining the same host names, connecting to the DR vCenter, recreating the pools, and so on … My recommendation is always to build identical environments and just replicate the base images. That does add a bit of overhead, since any change to any pool in HO must also be made in DR to maintain the same experience in case of failover.

What method are you using to replicate the base images from HO vCenter to the DR vCenter?

I am using vSphere Replication but you can use any hardware or software replication for the same.

Hi,

We are using an F5 LTM/APM configuration and currently only have one pod. Now we are planning to add another pod in a separate site. Can we accomplish Cloud Pod Architecture without a GSLB by having connection servers from both pods under the same load balancer? We are also using Folder Redirection through AD GPO. Is there a way to accommodate it in a Cloud Pod Architecture? Also, in the article you mentioned that with DFS-R we cannot write data to both sites at the same time. But if we disable session collaboration in desktop pools, a desktop session will automatically be terminated if we log in from somewhere else. Not sure if I am missing something in this thought process. Thanks in advance.

Best Regards,

Hi, is this separate site connected through dark fiber or stretched by any means? That would change many things, but I will assume you have another site that is L3 or WAN connected and answer your questions accordingly. VMware does not support joining connection brokers from different sites together (unless it is a stretched site with L2 adjacency), so that is not an option. You need independent connection brokers in every site, which you then join with Cloud Pod Architecture to enable global assignments. Aside from that, CPA does not interfere with how the connection is brokered, so GSLB is still a must in this case. The concept of DR with GPO is that you need 2 different sites with 2 different pools that sit in different OUs, and each OU has a Group Policy that points to the local DFS share, with the shares replicated through DFS-R. Thus if user 1 logs in to site 1 the profile is loaded from the site 1 file server, and if user 1 logs in to site 2 the profile is loaded from the site 2 file server. There is no session collaboration in multi-site: you have different sites with different pools (different virtual desktops), so you open a completely new session. You therefore need to ensure that a user never has a session open in each site at the same time; the same user should have a single session in one site or the other at any given time. This all changes if your second site is stretched and you want to treat your VDI as just one pod.